Training a large language model today isn’t just hard, it’s expensive. We’re talking about budgets that rival small-country defense programs. GPT-4 reportedly cost over $100 million to train. Gemini Ultra? By one estimate, closer to $191 million. Meanwhile, the original Transformer model from 2017 cost about $900. That’s not progress, it’s a financial explosion. And here’s the twist: much of that money isn’t going into better performance. It’s going into oversized models trained on too little data.

Why Bigger Isn’t Always Better

For years, the AI industry chased bigger models. More parameters. More layers. More neurons. The logic was simple: bigger model = better results. But research from 2022 turned that idea upside down. A DeepMind team trained over 400 language models under fixed compute budgets and found something shocking: when you double the size of your model, you should also double the amount of training data. Not triple. Not quadruple. Double. This is called the compute-optimal scaling law. And it means models like Gopher (280 billion parameters), trained on roughly 300 billion tokens, were far too large for the data they saw.

Enter Chinchilla: same total compute budget, but only 70 billion parameters and 1.4 trillion tokens, more than four times as much data as Gopher. Chinchilla beat Gopher, GPT-3, and even Megatron-Turing NLG on nearly every benchmark. All while using a quarter of Gopher’s parameters. The lesson? You don’t need a 500-billion-parameter monster to get top-tier performance. You need the right balance between model size and data volume. Most teams still ignore this. They spend $50 million training a giant model on 300 billion tokens when they could’ve trained three smaller, better-trained models for $15 million and outperformed the giant.

The Hidden Cost: Inference Isn’t Free

Training is just the beginning. Once your model is done, you still have to run it. Every time someone asks ChatGPT a question, every time a customer service bot replies, every time an AI writes a report, that’s inference. And it adds up fast. A 100-billion-parameter model deployed at scale can cost between $50,000 and $500,000 per year just to host. That’s not a one-time fee. It’s ongoing. If your model is used 10 million times a month, you’re paying for compute every second. And guess what? Bigger models use more power during inference. At the same precision, a 70-billion-parameter model needs about 10x the memory of a 7-billion one just to hold its weights, and every generated token costs more compute, which means higher latency. So if you saved on training by pairing a huge model with too little data, you might end up paying more in the long run. This is the trade-off no one talks about: do you spend more upfront to train a small, efficient model on plenty of data? Or spend less on training and more on serving it forever? The answer isn’t obvious. But Chinchilla gives us a hint: a smaller, well-trained model can match a larger one’s quality while needing less inference power. It’s smarter, not bigger.
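To make the memory side of that trade-off concrete, here is a rough sketch that estimates how much memory the weights alone occupy at different precisions. It assumes the usual bytes-per-parameter figures (4 for FP32, 2 for FP16/BF16, 1 for 8-bit, 0.5 for 4-bit) and ignores activations, the KV cache, and framework overhead, so real deployments will need more. The function name is illustrative, not taken from any library.

```python
# Approximate bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return the memory needed for the weights only, in GB (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for name, params in [("7B", 7e9), ("70B", 70e9)]:
        for precision in ("fp16", "int8", "int4"):
            gb = weight_memory_gb(params, precision)
            print(f"{name} at {precision}: about {gb:.1f} GB of weights")
```

Weight memory scales linearly with parameter count, so the 70B model needs ten times the space of the 7B model at any given precision. Dropping to a lower precision narrows that gap in absolute terms but does not remove it.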

How to Actually Save Money

Here’s what works in practice, based on real results from labs and startups:

- Use pre-trained models: Meta’s LLaMA 2, Mistral, and DeepSeek R1 are free to use. You don’t need to train from scratch. Fine-tuning a 70B model costs maybe $20,000. Training one from zero? $10 million.

- Scale data and model together: If you’re training a 13B model, aim for 500+ billion tokens. Not 100 billion. Not 200 billion. At least 500. That’s the sweet spot.

- Use mixed-precision training: FP16 or BF16 instead of FP32. This roughly halves memory use. NVIDIA’s H100s handle this natively. You’ll train faster and cheaper.

- Shard your model: Tools like DeepSpeed and FSDP split the model across GPUs. Instead of needing 100 H100s, you might get by with 60. That’s a $1 million savings on hardware rental.

- Quantize after training: Convert weights from 16-bit to 8-bit or even 4-bit. You typically lose little accuracy and can cut inference costs by around 60%. Many production systems do this.

- Use prompt engineering: Sometimes, a well-crafted prompt can replace a fine-tuned model entirely. No training needed. No cost. Just better inputs.
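The quantization step in the list above can be illustrated with a toy example. This is a minimal sketch of symmetric round-to-nearest 8-bit quantization on a plain Python list. It is not how AWQ or GPTQ work (those use calibration data and per-group scales), and the function names are made up for illustration. The point is only to show why storing small integers plus one scale factor costs little accuracy.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; guard against an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.42, -1.27, 0.05, 0.9]  # made-up example values
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    max_error = max(abs(a - b) for a, b in zip(weights, restored))
    print("quantized:", q)
    print("largest round-trip error:", max_error)
```

Each weight is off by at most half a quantization step (scale / 2) after the round trip. Production schemes improve on this by using a separate scale per channel or per small group of weights.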

One startup in Austin reduced their LLM training budget by 87% by switching from a 34B model trained on 200B tokens to a 13B model trained on 600B tokens. Their inference latency dropped 40%. Their monthly cloud bill went from $48,000 to $6,200.

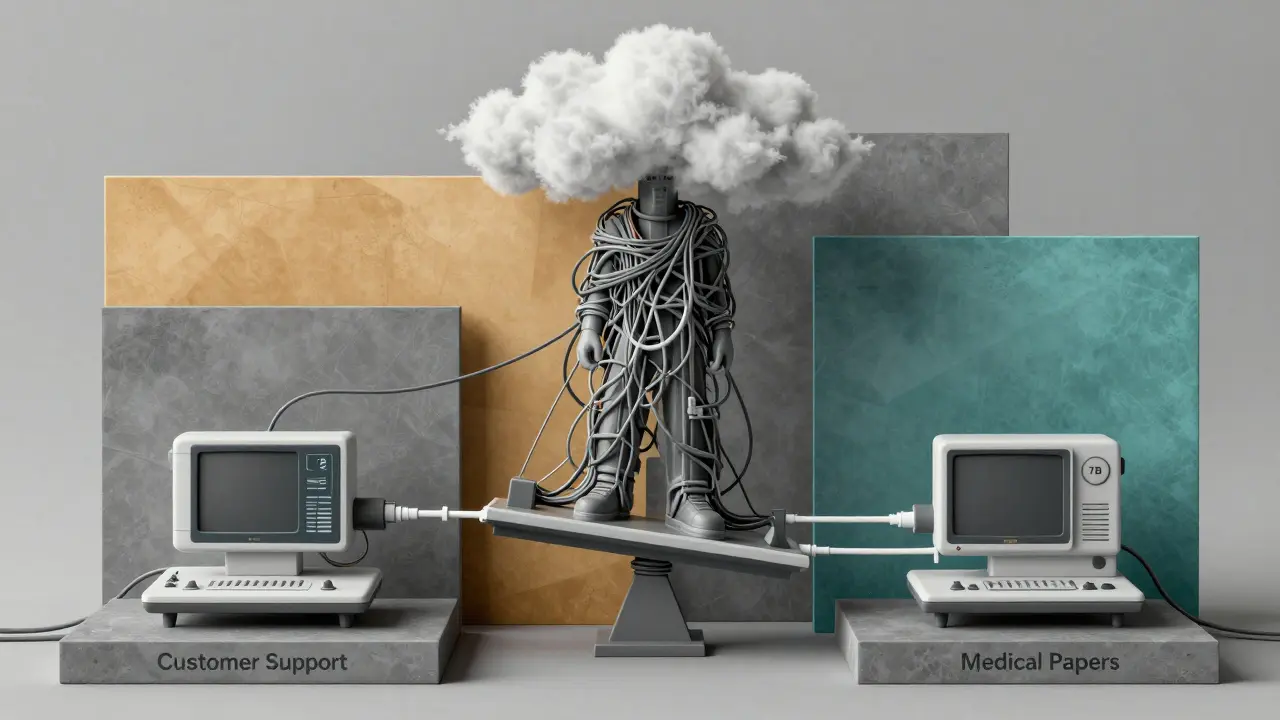

The Big Mistake: Training Only Once

Most teams treat training like a one-time event. They spend millions, then freeze the model. That’s a mistake. The real cost savings come from iterative training. Instead of training one massive model once, train three smaller models on different datasets. One on customer support logs. One on medical papers. One on legal contracts. Each costs $2 million. Total? $6 million. Now, deploy them separately. On its own domain, each one can outperform a single 100B model trained on generic web text. And you can update them independently. No need to retrain everything. This approach is cheaper, more flexible, and more accurate. It’s also how companies like Anthropic and Mistral are winning. They don’t build one giant brain. They build many smart, focused ones.

What to Do Right Now

If you’re thinking about training an LLM, here’s your checklist:

- Don’t start with model size. Start with your compute budget. How much can you afford to spend in total, training plus inference, for the next year?

- Use a pre-trained base model. LLaMA 3, Mistral 7B, or DeepSeek R1. No need to reinvent the wheel.

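- Calculate your data needs: for every 1 billion parameters, train on about 20 billion tokens. That is the ratio Chinchilla used (70B parameters, 1.4T tokens). More is fine; much less leaves the model undertrained.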

- Train with mixed precision and gradient checkpointing. Use DeepSpeed or FSDP.

- Quantize to 4-bit before deployment. Use tools like AWQ or GPTQ.

- Monitor inference costs. If your model is used over 1 million times/month, consider switching to a smaller one, even if it’s slightly less accurate.

- Test multiple small models. You might find that three 7B models beat one 70B model in real-world use.
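The first and third checklist items can be turned into a quick calculation. The sketch below rests on two assumptions that are not stated in the checklist itself: the common approximation that training takes about 6 FLOPs per parameter per token (C ≈ 6·N·D), and a target of about 20 tokens per parameter, the ratio implied by Chinchilla’s 70B parameters and 1.4T tokens. Given a compute budget in FLOPs, it solves for a model size and token count. Treat the result as a starting point, not a prescription.

```python
import math


def compute_optimal_split(flops_budget: float, tokens_per_param: float = 20.0):
    """Split a training compute budget into (parameters, tokens).

    Assumes C = 6 * N * D and D = tokens_per_param * N,
    which gives N = sqrt(C / (6 * tokens_per_param)).
    """
    params = math.sqrt(flops_budget / (6.0 * tokens_per_param))
    tokens = tokens_per_param * params
    return params, tokens


if __name__ == "__main__":
    # Hypothetical budget, chosen to be close to Chinchilla's scale.
    budget = 5.88e23
    n, d = compute_optimal_split(budget)
    print(f"about {n / 1e9:.0f}B parameters on {d / 1e12:.2f}T tokens")
```

Converting a dollar budget to FLOPs depends on your GPU type, rental price, and achieved utilization, so that step is left out here.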

The era of throwing money at bigger models is over. The future belongs to teams that think like engineers, not billionaires. Train smarter. Scale smarter. Serve smarter.

What’s the cheapest way to train an LLM today?

The cheapest way is to fine-tune a free, pre-trained model like LLaMA 3 or Mistral 7B. Training from scratch costs millions. Fine-tuning costs thousands. Use cloud GPUs on spot instances, train with mixed precision, and limit training to 10-20 billion tokens. You can get strong results on specialized tasks for under $5,000.

Is inference cost really that big of a deal?

Yes, if your model is used daily. A 70B model running 10,000 queries per day can cost $15,000/month in cloud compute. A 7B model doing the same job might cost $2,000. The difference isn’t just savings, it’s scalability. Many startups fail because they didn’t account for inference costs after training.
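Working those figures through makes the gap easier to see. This small sketch uses only the numbers quoted in the answer above ($15,000 vs. $2,000 per month at 10,000 queries per day) and assumes a 30-day month. The helper name is illustrative.

```python
def cost_per_query(monthly_cost_usd: float, queries_per_day: int, days_per_month: int = 30) -> float:
    """Average cost of one query, given a monthly bill and daily traffic."""
    return monthly_cost_usd / (queries_per_day * days_per_month)


if __name__ == "__main__":
    large = cost_per_query(15_000, 10_000)  # 70B model figures from the text
    small = cost_per_query(2_000, 10_000)   # 7B model figures from the text
    annual_savings = (15_000 - 2_000) * 12
    print(f"70B: ${large:.4f} per query")
    print(f"7B:  ${small:.4f} per query")
    print(f"Annual difference: ${annual_savings:,}")
```

At these rates the larger model costs about five cents per query, roughly 7.5 times the smaller one, and the difference adds up to $156,000 per year at constant traffic.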

Can I train a model on a single GPU?

Not for anything beyond tiny models (under 1B parameters). But you can fine-tune a 7B model on a single A100 or H100 using quantization and offloading. Tools like Unsloth and QLoRA make this possible. You won’t get GPT-4 quality, but you’ll get a usable, domain-specific model for under $1,000 in cloud costs.

Why did Chinchilla outperform Gopher if it was smaller?

Because it was trained on more than four times as much data. Gopher was a 280B model trained on roughly 300B tokens. Chinchilla was 70B but trained on 1.4T tokens: about the same compute, but a far better data-to-parameter ratio. More data means the model learns patterns more deeply instead of just carrying more weights. It’s like giving a student 10 textbooks instead of 2, even if that student isn’t as tall.
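The comparison can be checked with back-of-the-envelope arithmetic. The sketch below assumes the publicly reported figure of roughly 300 billion training tokens for Gopher and uses the common approximation of about 6 FLOPs per parameter per token. Neither the approximation nor the helper names come from this article, so read the output as order-of-magnitude estimates.

```python
def training_flops(params: float, tokens: float) -> float:
    """Approximate training compute, assuming about 6 FLOPs per parameter per token."""
    return 6.0 * params * tokens


# (parameters, training tokens); Gopher's token count is the reported ~300B.
MODELS = {
    "Gopher": (280e9, 300e9),
    "Chinchilla": (70e9, 1.4e12),
}

if __name__ == "__main__":
    for name, (params, tokens) in MODELS.items():
        ratio = tokens / params
        flops = training_flops(params, tokens)
        print(f"{name}: {ratio:.1f} tokens per parameter, about {flops:.2e} FLOPs")
```

Under these assumptions the two training runs land within about 20% of each other in compute, while Chinchilla sees roughly 20 tokens per parameter against Gopher’s one.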

Should I use open models or build my own?

Use open models unless you have unique data or regulatory needs. LLaMA 3, Mistral, and DeepSeek R1 are state-of-the-art and free. Training your own from scratch only makes sense if you have proprietary data (e.g., internal medical records, legal contracts) that no public model has seen. Otherwise, you’re wasting money.

How do I know if my model is overtrained or undertrained?

Watch the validation loss. If it is still falling steadily when training stops, the model is undertrained and would benefit from more tokens. If it has been flat for a long stretch, extra tokens are buying you little at that model size. And if your model is huge (50B+) and you trained it on less than 200B tokens, it is almost certainly undertrained. As a rule of thumb, use the Chinchilla ratio: about 20 tokens per parameter. For a 13B model, aim for 260B+ tokens.

Tia Muzdalifah

February 27, 2026 AT 01:33

lol i just fine-tuned a mistral 7b on my cat’s meows and it started writing poetry about tuna. cost me $87 on spot instances. no cap. the real win? no one at my job even noticed i didn’t train a ‘real’ model. they just said ‘wow this chatbot gets me’ 😌

Zoe Hill

February 27, 2026 AT 05:43

sooo… i tried training a 13b model on 600b tokens like the post said and my gpu cried. literally. the fan went full jet engine mode. but it WORKED. inference is 10x faster and my monthly bill dropped from $40k to $5k. also, i misspelled ‘quantize’ as ‘quanitize’ in my config file and it still trained? ai is wild. 🤯

Albert Navat

February 27, 2026 AT 19:01

let’s cut through the noise: the entire ‘chinchilla’ paradigm is just a rebrand of ‘efficiency’ in a field obsessed with bloat. you’re not saving money, you’re optimizing for marginal gains in a hyper-competitive space. fp16 + fsdp + awq isn’t innovation, it’s baseline ops now. if you’re still using fp32 or not sharding, you’re not an engineer, you’re a hobbyist with a credit card. also, ‘prompt engineering replaces fine-tuning’? only if your use case is ‘ask the model to stop being dumb.’ real domain adaptation requires data. not vibes.

King Medoo

February 27, 2026 AT 22:28

look, i get it. everyone wants to be the next mistral. but here’s the truth no one admits: we’re all just playing musical chairs with h100s. the ‘cheapest’ way to train an llm? don’t. just use claude 3.5 sonnet. it’s cheaper, faster, and doesn’t need your soul. training your own model is like building a jet engine in your garage because ‘you want to understand it.’ spoiler: you don’t. and your investors won’t care. also, i used 3 emojis in this comment. that’s my limit. 💔🚀🤖

Rae Blackburn

March 1, 2026 AT 14:48

you think this is about cost? nah. this is about control. the big labs want you to think you need to train your own model so you’ll keep buying their cloud services. they’re the ones selling the h100s, the spot instances, the quantization tools. the ‘open models’? they’re bait. one day, llama 4 will come with a ‘pay-per-inference’ license. mark my words. i’ve seen the docs. they’re already drafting the TOS. they’re not saving you money, they’re trapping you. 🚨

LeVar Trotter

March 1, 2026 AT 21:08

really appreciate this breakdown. one thing i’d add: iterative training isn’t just about cost, it’s about resilience. when you train three focused models instead of one monolith, you don’t have to retrain everything when one domain shifts. if your medical bot starts hallucinating on new guidelines, you update just that one. no downtime. no $2M retrain. just a weekend fine-tune. also, for teams new to this: use hugging face’s transformers + bitsandbytes. the 4-bit quantization is plug-and-play. and yes, you can absolutely run a 7b model on a single a100 with qlora. i’ve done it. it’s not glamorous, but it’s sustainable. we’re not building rockets, we’re building tools. keep it lean.