Choosing Your Governance Model

Not every company needs the same setup. Depending on your size and risk appetite, you'll likely lean toward one of three models.

The first is the centralized model. Here, a single committee has the final say over everything. This is the gold standard for high-risk apps: about 92% of companies using this approach report fewer regulatory incidents. It's what IBM does with its AI Ethics Board. The trade-off? It takes about 30% more executive time to manage.

Then there is the federated model, which is the sweet spot for large enterprises. You have a central oversight body for the big rules, but individual business units have their own subcommittees for the nitty-gritty details. JPMorgan Chase uses this to manage a massive volume of use cases without slowing down. Microsoft found this approach allowed them to deploy tools 44% faster while staying compliant.

Finally, the decentralized model lets business units handle their own governance. While this is 68% more efficient for low-risk tools, it's dangerous for sensitive data. Data shows a 57% higher rate of compliance violations in decentralized setups. If you're handling customer PII or medical records, this isn't the way to go.

| Model | Best For | Key Advantage | Main Drawback |
|---|---|---|---|
| Centralized | High-Risk/Regulated | Highest compliance safety | Heavier executive burden |
| Federated | Large Enterprises | Balanced speed and safety | Complex coordination |
| Decentralized | Low-Risk/Internal | Maximum agility | High risk of violations |
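The "Best For" column can be read as a simple decision rule. The sketch below is only an illustration: the function name, the two boolean inputs, and the order in which they are checked are assumptions, not something the models themselves prescribe.

```python
from enum import Enum


class GovernanceModel(Enum):
    CENTRALIZED = "centralized"
    FEDERATED = "federated"
    DECENTRALIZED = "decentralized"


def suggest_model(high_risk_or_sensitive: bool, large_enterprise: bool) -> GovernanceModel:
    """Map the "Best For" column onto a rough rule of thumb.

    Assumption: risk takes precedence over company size, so a large
    enterprise handling PII or medical records still gets "centralized".
    """
    if high_risk_or_sensitive:
        # High-risk or regulated workloads: compliance safety comes first.
        return GovernanceModel.CENTRALIZED
    if large_enterprise:
        # Many business units: central rules, local subcommittees.
        return GovernanceModel.FEDERATED
    # Low-risk, internal tools: favor agility.
    return GovernanceModel.DECENTRALIZED
```

In practice the choice is rarely this binary; treat the output as a starting point for discussion rather than a verdict.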

Who Needs a Seat at the Table?

If your committee is just IT people and lawyers, you're going to have a bad time. You need a mix of perspectives to avoid blind spots. Experts like Professor Fei-Fei Li have pointed out that lacking technical diversity leads to 73% more algorithmic bias incidents. To avoid this, your committee must include:

- Legal: To keep you out of court and ensure regulatory oversight.

- Ethics and Compliance: To make sure the AI doesn't do something that ruins your brand reputation.

- Privacy: To manage how data is processed and stored.

- Information Security: To stop the AI from becoming a backdoor for hackers.

- Research and Development: To provide the actual technical expertise.

- Product Management: To ensure the tool actually solves a user problem.

- Executive Leadership: To align the AI strategy with the company's bottom line.

The RACI Framework for AI Decisions

Confusion over who decides what is the fastest way to kill a project. This is where a RACI matrix comes in: a responsibility assignment chart that clarifies who is Responsible, Accountable, Consulted, and Informed for every task. Without this, you'll end up with a bureaucratic bottleneck where approvals take over 30 days. In a typical AI governance setup:

- Accountable (A): The Committee Chair (usually a C-suite exec). Only one person can be accountable. If the project fails or violates a law, the buck stops here.

- Responsible (R): Legal and Technical leads. They do the heavy lifting: verifying compliance, testing the model, and writing the risk reports.

- Consulted (C): Privacy and Ethics experts. They provide input and warnings before a decision is made. They don't do the work, but their feedback is required.

- Informed (I): The Business Units. They need to know when the tool is approved so they can actually start using it.
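The "only one person can be accountable" rule is easy to check mechanically. Here is a minimal sketch, assuming a RACI assignment is stored as a plain mapping from role to letter; the `validate_raci` helper and the role names are hypothetical, and the "at least one Responsible" check reflects common RACI practice rather than anything stated above.

```python
VALID_CODES = {"R", "A", "C", "I"}


def validate_raci(assignments: dict[str, str]) -> None:
    """Raise ValueError if the assignment breaks basic RACI rules."""
    codes = list(assignments.values())
    unknown = set(codes) - VALID_CODES
    if unknown:
        raise ValueError(f"unknown RACI codes: {sorted(unknown)}")
    # The buck stops with exactly one role.
    if codes.count("A") != 1:
        raise ValueError("exactly one role must be Accountable")
    # Assumed convention: somebody has to actually do the work.
    if "R" not in codes:
        raise ValueError("at least one role must be Responsible")


# Hypothetical assignment for a single tool-approval decision.
tool_approval = {
    "Committee Chair": "A",
    "Legal lead": "R",
    "Technical lead": "R",
    "Privacy": "C",
    "Ethics": "C",
    "Business Units": "I",
}
validate_raci(tool_approval)  # passes silently
```

Running a check like this whenever the matrix changes catches the most common failure mode, two "Accountable" owners, before it turns into an approval dispute.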

Setting the Meeting Cadence

If you meet every week, you'll run out of things to say. If you meet every six months, you'll be too late to stop a disaster. A tiered approach is the only way to stay agile.

- The Strategic Tier (Quarterly): Your executive-level committee should meet every 90 days. This is for the big picture: reviewing overall policy, updating the charter, and looking at performance metrics across the company. This is where you decide if the EU AI Act (whose first obligations began applying in February 2025) requires a change in your general approach.

- The Operational Tier (Bi-Weekly): Working groups should meet every 14 days. These teams look at specific use cases. They ask: "Does this specific marketing bot have access to customer emails?" They handle the risk tiering and technical reviews.

- The Fast Track (72-Hour Window): For urgent approvals, use electronic voting. You can't wait two weeks for a meeting to approve a critical patch or a time-sensitive tool. A 72-hour digital sign-off keeps the business moving.
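The three cadences reduce to simple date arithmetic. The following is a rough sketch using Python's standard `datetime` module; the constant and function names are made up for illustration, and it deliberately ignores weekends and holidays.

```python
from datetime import datetime, timedelta

STRATEGIC_INTERVAL = timedelta(days=90)    # quarterly executive review
OPERATIONAL_INTERVAL = timedelta(days=14)  # bi-weekly working group
FAST_TRACK_WINDOW = timedelta(hours=72)    # electronic sign-off window


def next_meeting(last_meeting: datetime, interval: timedelta) -> datetime:
    """Date of the next meeting for a tier, given the previous one."""
    return last_meeting + interval


def fast_track_deadline(submitted_at: datetime) -> datetime:
    """Latest moment a fast-track vote still counts."""
    return submitted_at + FAST_TRACK_WINDOW


def vote_is_on_time(submitted_at: datetime, voted_at: datetime) -> bool:
    """True if the vote landed inside the 72-hour window."""
    return voted_at <= fast_track_deadline(submitted_at)


submitted = datetime(2025, 3, 3, 9, 0)
print(fast_track_deadline(submitted))                  # 2025-03-06 09:00:00
print(next_meeting(submitted, OPERATIONAL_INTERVAL))   # 2025-03-17 09:00:00
```

A real scheduler would also need a rule for what happens when the window closes without a quorum; that policy choice is outside the scope of this sketch.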

Turning Governance into a Workflow

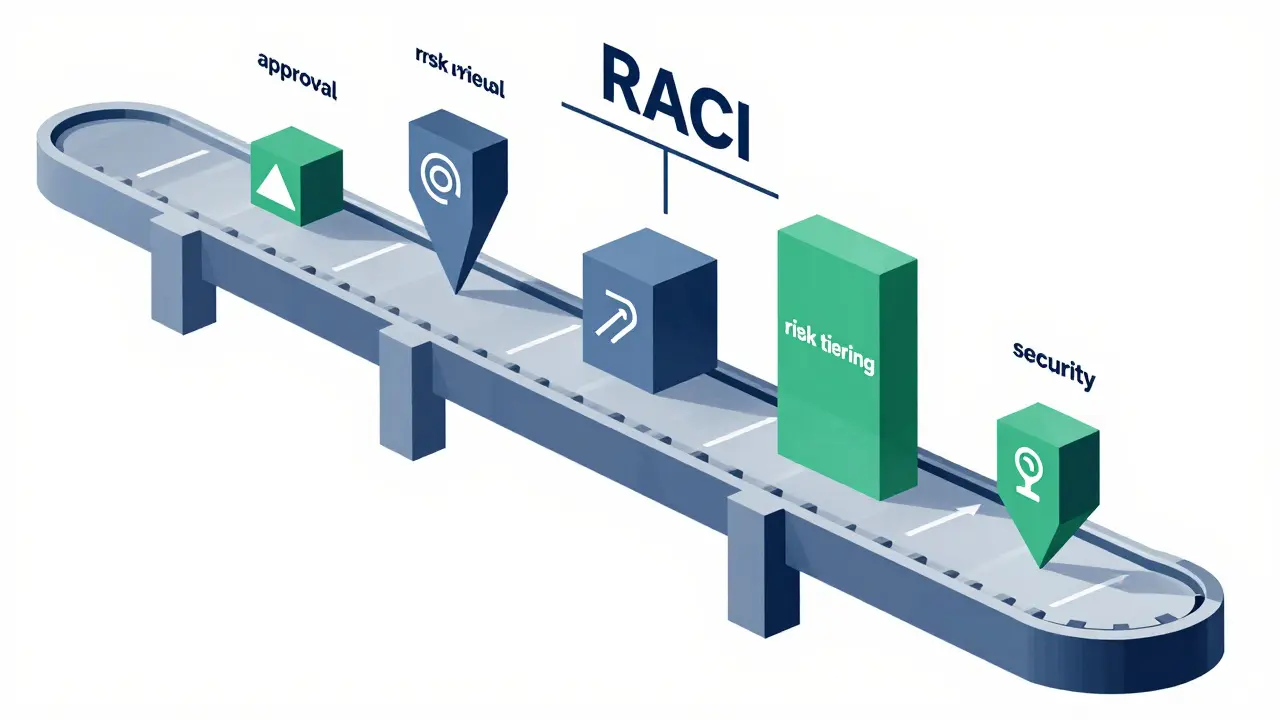

Governance shouldn't feel like a trip to the DMV. To avoid being a bottleneck, you need a standardized intake process. High-performing committees don't just "review" things; they follow a conveyor belt of steps:

- Intake (2-5 days): The requester submits the tool's purpose and data sources.

- Risk Tiering (3-7 days): The tool is categorized as Low, Medium, or High risk. Low-risk tools (like internal brainstorming bots) might get a fast-pass.

- Privacy & Security Review (5-10 days): Checking for data leaks and vulnerabilities.

- Data Readiness (3-5 days): Ensuring the data used for fine-tuning is clean and legal.

- Final Approval (2-3 days): The Accountable person signs off.
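Adding up the stage estimates gives a quick sense of the end-to-end cycle time. This is a back-of-the-envelope sketch that assumes the five stages run strictly one after another with no waiting time in between; the `STAGES` list simply restates the ranges above.

```python
# (stage name, fastest days, slowest days), taken from the list above
STAGES = [
    ("Intake", 2, 5),
    ("Risk Tiering", 3, 7),
    ("Privacy & Security Review", 5, 10),
    ("Data Readiness", 3, 5),
    ("Final Approval", 2, 3),
]


def cycle_time_bounds(stages: list[tuple[str, int, int]]) -> tuple[int, int]:
    """Best-case and worst-case total days for a sequential pipeline."""
    best = sum(fastest for _, fastest, _ in stages)
    worst = sum(slowest for _, _, slowest in stages)
    return best, worst


print(cycle_time_bounds(STAGES))  # (15, 30)
```

Under these assumptions a request takes between 15 and 30 days, so the slowest path sits right at the 30-day mark. Running stages in parallel, or letting low-risk tools skip some of them, would shorten it.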

Avoiding Common Pitfalls

One of the biggest mistakes is the "knowledge gap." There are stories of committees rejecting million-dollar tools because they didn't understand the difference between fine-tuning a model and prompt engineering. To prevent this, non-technical members need about 20-25 hours of specialized AI training, while technical members need 15-20 hours of regulatory training.

Another trap is lacking "teeth." As Dr. Rumman Chowdhury from Accenture noted, a governance committee without veto power is just a suggestion box. For a committee to be effective, it must have the explicit authority to halt a deployment if it doesn't meet safety standards.

Finally, don't let your policies gather digital dust. Leading organizations update their charters and acceptable use policies quarterly. With 27 new AI regulations appearing globally in early 2025 alone, a policy written six months ago is likely already obsolete.

What is the most effective governance model for high-risk AI applications?

The centralized model is most effective for high-risk applications, with 92% of organizations using this approach reporting fewer regulatory incidents. It ensures a single, consistent set of standards is applied across the entire enterprise, though it requires more executive time to maintain.

How does a RACI matrix help in AI governance?

A RACI matrix prevents decision paralysis by clearly defining who is Responsible for the work, who is Accountable for the outcome, who must be Consulted for expertise, and who needs to be Informed of the result. This reduces approval delays and prevents overlapping responsibilities.

How often should an AI governance committee meet?

A tiered cadence is recommended: executive-level strategic committees should meet quarterly to review policy and performance, while operational working groups should meet bi-weekly to assess specific use cases and technical risks.

What happens if the committee lacks technical expertise?

Lack of technical expertise is associated with a significant increase in algorithmic bias incidents (73% more, per the figure cited earlier) and can result in the rejection of high-value tools due to a misunderstanding of how the technology works. It is critical to include engineers and R&D specialists on the committee.

How long does it take to set up a formal AI governance committee?

Typically, it takes 8-12 weeks of preparatory work, including stakeholder mapping, drafting the charter, and defining roles. Larger organizations with over 10,000 employees may require 30% more time to coordinate across departments.

Anand Pandit

April 29, 2026 AT 19:51

This is a fantastic breakdown of a very complex topic. Most people forget that governance isn't about slowing things down, but about creating a safe path to move faster. I've seen so many teams struggle with the RACI part specifically, and having a clear 'Accountable' person really removes that friction from the process.

rahul shrimali

May 1, 2026 AT 13:47

love the risk tiering part lets u move fast without breaking stuff!!

Reshma Jose

May 2, 2026 AT 12:50

Totally agree on the federated model. It's the only way to keep the C-suite happy while letting the devs actually do their jobs without waiting for a monthly meeting to change a prompt.

Eka Prabha

May 3, 2026 AT 05:33

The obsession with these "governance committees" is merely a facade for the systemic implementation of algorithmic panopticons. We are witnessing a curated orchestration of corporate hegemony where "ethics" is simply a semantic lubricant to ease the ingestion of our cognitive data. The utilization of a RACI matrix is a classic bureaucratic obfuscation technique designed to dilute actual liability while maintaining a rigid hierarchy of surveillance. It is truly an exercise in performative compliance while the underlying heuristic models continue to propagate socio-economic biases. One must wonder if these "committees" are actually just conduits for the globalist agenda to standardize human thought patterns under the guise of safety. The sheer audacity of pretending that a 72-hour digital sign-off constitutes "oversight" is laughable. We are essentially handing the keys to our digital autonomy to a handful of executives who likely cannot even define a neural network. This entire structure is a house of cards built on the shaky foundation of venture capital greed. I find the notion of "data readiness" to be particularly offensive given the predatory nature of data scraping. The intersection of corporate greed and AI governance is where true accountability goes to die. It is a sterile, corporate theater meant to pacify the masses. We are being lulled into a false sense of security by these tidy tables and bullet points. The reality is far more sinister and far less organized than a PDF suggests. Only those blinded by the promise of efficiency would embrace this as progress. It is a tragedy of modern intellectual surrender.

ujjwal fouzdar

May 4, 2026 AT 23:06

Is this not the ultimate tragedy of the modern era? We build gods of silicon and then try to leash them with... a spreadsheet. A RACI matrix is but a fragile umbrella in a hurricane of existential dread. We dance around "risk tiering" while the very essence of human creativity is being distilled into tokens and probabilities. How poetic that we need a "Committee Chair" to decide if we are allowed to innovate. It's a masquerade of control in a world sliding toward an inevitable singularity where the "Accountable" party will be a ghost in the machine.

Bharat Patel

May 5, 2026 AT 10:27

It's interesting to think about how these structures reflect our need for order in the face of the unknown. While the technical side is vital, the human element, meaning the ethics and the philosophy of what we are creating, is where the real battle is fought.

Bhagyashri Zokarkar

May 6, 2026 AT 02:36

i just cant believe ppl actually spend their whole day in these meetings like seriously who has the energy for bi-weekly reviews its just so draining and i feel like we are all just pretending to care about the rules while actually just wanting to go home and nap lol its all just such a big mess of corporate buzzwords and honestly i dont think a matrix fixes the fact that people are just lazy and dont want to read the documentation anyway

Rakesh Dorwal

May 7, 2026 AT 03:35

The centralized model is the only way to go if we want to protect our own national interests from foreign AI influence. These international regulations like the EU AI Act are just ways for other powers to dictate how we handle our tech. We need our own strong, centralized grip on this to ensure sovereignty.

Vishal Gaur

May 7, 2026 AT 12:55

i mean its a bit too optimistic to think a 25 day cycle is fast when the tech changes every two weeks but i guess its better than nothing... though i bet half these committees just end up being a waste of time because people dont actually follow the RACI guidelines once the project starts getting late and they just skip the security review to meet a deadline anyway lol