Imagine you need to solve a complex physics problem. You wouldn't hire a general practitioner; you'd want a physicist. Now imagine if a single brain could instantly switch between a physicist, a historian, and a coder depending on the specific word it was processing. That is essentially how Mixture-of-Experts is an architectural design for neural networks that replaces a single large layer with multiple smaller, specialized subnetworks called experts. Instead of firing every single neuron for every single token, it only wakes up the parts of the model that actually matter for that specific piece of data.

For years, we scaled models by making them "dense," meaning every parameter worked during every single forward pass. But that approach hit a wall: as models grew, the cost to run them became astronomical. MoE breaks this link by decoupling total model capacity from the actual compute used per token. You can have a model with a trillion parameters but only use a fraction of them for any given task, giving you the intelligence of a giant model with the speed of a much smaller one.

How MoE Actually Works: The Gating Game

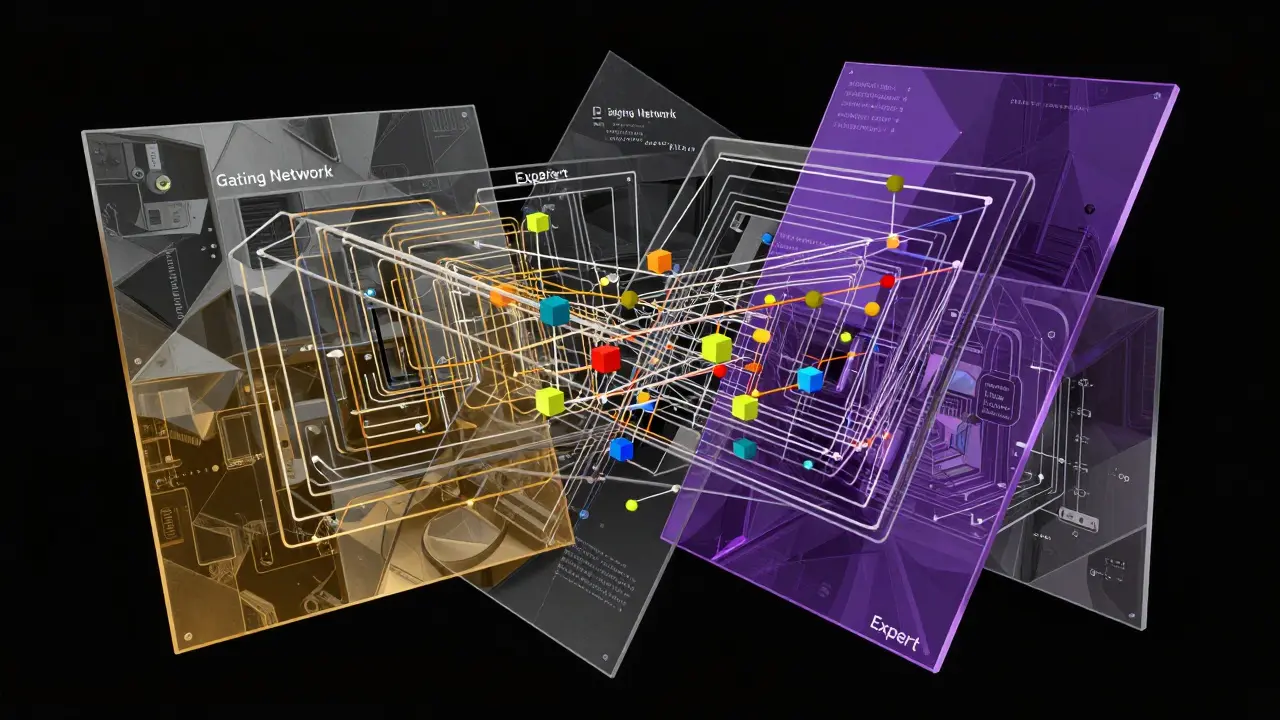

In a standard transformer, the feed-forward network (FFN) is a monolithic block. MoE swaps this block for a set of specialized experts and a Gating Network, which acts like a sophisticated switchboard. When a token enters the layer, the router decides which experts are best suited to handle it.

Take the Mixtral model as a real-world example. It converts every layer into an expert layer containing eight experts. However, it doesn't use all eight; it only activates two experts per token. This means while the model might have 47 billion total parameters, it only uses about 13 billion active parameters for any single forward pass. This sparse activation is the secret sauce that keeps latency low while maintaining high quality.

Modern implementations are getting even more aggressive. DeepSeek-v3 combined this sparsity with Multi-head Latent Attention (MLA). By using a low-rank joint projection to handle key and value vectors, they slashed the KV cache size by over 93% compared to a 67 billion parameter dense model. This makes the model not just computationally efficient, but far easier to fit into GPU memory during heavy workloads.

The Quality vs. Cost Tradeoff

The primary draw of MoE is the massive compute savings. Some empirical data shows that MoE models provide 4 to 16 times more compute savings at the same perplexity levels as dense models. For those in the training phase, the gains are even more stark; the Switch Transformer reported a 7-times speedup during pretraining. DeepSeek-v3 pushed this further with an FP8 mixed precision framework, bringing the estimated training cost down to roughly $5.6 million-a fraction of what a dense model of similar capacity would cost.

| Feature | Dense Models | MoE Models |

|---|---|---|

| Compute per Token | High (All parameters active) | Low (Only selected experts active) |

| VRAM Requirements | Proportional to active size | Proportional to total capacity |

| Training Stability | Generally Stable | Complex (Requires load balancing) |

| Inference Throughput | Linear scaling | Significantly higher at large batches |

However, the "free lunch" ends when you look at memory. While you only compute with a few experts, you still have to store all of them in your VRAM. If you have eight experts of 7 billion parameters each, you need enough memory for 56 billion parameters, even if you only use 13 billion per token. This creates a massive memory footprint that can make deployment tricky for smaller hardware setups.

Navigating the Engineering Hurdles

Training an MoE model isn't as simple as swapping a layer. You have to deal with the "expert collapse" problem, where the router keeps sending all tokens to the same two or three experts, leaving the others useless. Engineers must implement strict load-balancing losses to force the model to utilize all available experts equally.

There is also the issue of communication overhead. In a distributed setup, different experts usually live on different GPUs. If a token needs an expert on GPU 0 but was processed on GPU 7, the token has to travel across the network. This "all-to-all" communication can become a bottleneck that eats into the compute savings if the network isn't fast enough.

Fine-tuning also behaves differently. MoE models can sometimes show weaker sample efficiency during domain adaptation compared to dense models. Because the knowledge is spread across sparse experts, the model might require different hyperparameter settings or a more careful approach to avoid disrupting the learned routing patterns.

Cutting the Fat: Compression and Optimization

To solve the memory crisis, researchers are turning to expert compression. A notable advancement is Expert-Selection Aware Compression (EAC-MoE). Published in late 2025, this method uses quantization-aware router calibration to stop the model from losing accuracy when weights are compressed. By pruning experts that the router rarely uses, EAC-MoE can reduce memory usage by 4 to 5 times and boost throughput by up to 1.7 times, with a negligible accuracy loss of under 1.25%.

We are also seeing the rise of "cost-aware routing." Instead of just picking the best expert, the router considers the computational cost of the expert. This allows the model to balance the need for a high-quality answer against the reality of hardware constraints, essentially choosing a "good enough" expert to save time and energy.

Is MoE Right for Your Project?

If you are a massive organization with a cluster of H100s and you need a model that handles a hundred different tasks-from coding Python to writing poetry-MoE is the gold standard. The ability to scale to trillions of parameters without needing a nuclear power plant for inference is too good to pass up. Production systems like Grok have already proven that this architecture is viable at scale.

For smaller teams, the barrier is the infrastructure. If you can't handle the VRAM requirements or the complexity of distributed training, a smaller, highly-distilled dense model might actually be more efficient. The routing overhead in very small MoE models can even outweigh the benefits, making them slower than their dense counterparts.

Does MoE make a model "smarter" than a dense model?

Not necessarily "smarter" in terms of raw reasoning, but it allows the model to have a much larger "knowledge base" (total parameters) without slowing down. This generally leads to better performance on diverse tasks because the model can dedicate specific experts to different domains.

Why does MoE require more VRAM if it uses fewer parameters per token?

Because the GPU must have the weights for all possible experts loaded in memory to be ready for the router's decision. Even if you only use 10% of the experts for one token, the other 90% must still reside in memory to be available for the next token.

What is the main risk of using a Mixture-of-Experts architecture?

The biggest risks are training instability and expert collapse. If the gating network isn't tuned correctly, the model may rely on a tiny fraction of its experts, effectively becoming a smaller dense model and wasting the majority of its parameter capacity.

How does MoE affect inference latency?

It generally reduces latency for a given level of model quality. Since fewer FLOPs (floating point operations) are required per token, the model can generate text faster than a dense model with the same total parameter count.

Can MoE be used for multimodal models?

Yes, MoE is increasingly used in multimodal and multitask learning. Different experts can specialize in different modalities (like images vs. text) or different types of tasks, allowing one model to handle a vast array of inputs efficiently.