Imagine a machine that doesn't just read a description of a dog or look at a photo of one, but understands that the bark it hears, the video of the tail wagging, and the word "canine" all point to the same concept. For years, AI treated these as separate silos. We had one model for text and another for images. But the real magic happens when we stop treating these as different languages and start translating them into a single, shared mathematical space. This is the core of multimodal transformers, a technology that is fundamentally changing how machines perceive the world.

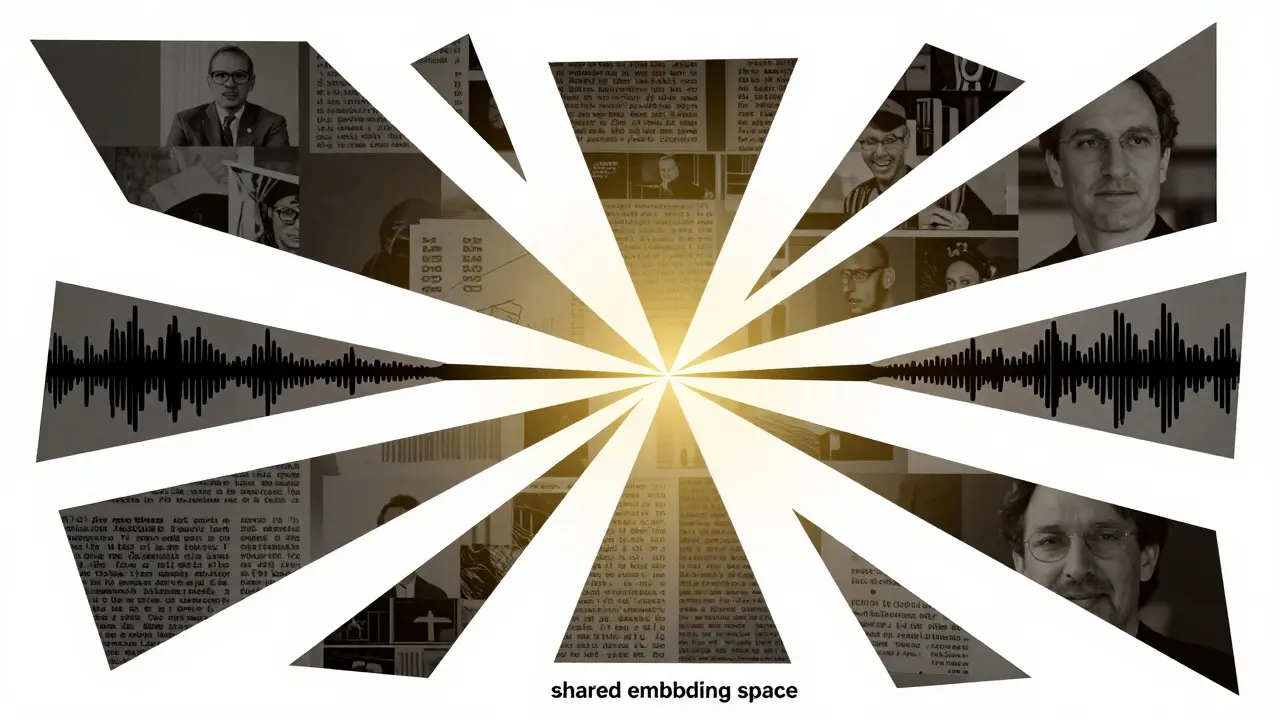

At its heart, the goal is alignment. We want to take a piece of text, a pixel-heavy image, a wavy audio file, and a temporal video clip and map them to a shared semantic embedding space. When this works, the "distance" between the embedding of the word "sunset" and an actual image of a sunset becomes nearly zero. This allows for things like cross-modal search, where you can find a specific moment in a thousand-hour video library just by typing a natural language query.

The Blueprint of Multimodal Architectures

To make this work, engineers don't just throw all the data into one pot. They use a structured pipeline that begins with modality-specific encoders. Think of these as specialized translators that turn raw data into a format the transformer can actually understand.

For text, the system typically uses Byte Pair Encoding (BPE) is a subword tokenization method that breaks text into manageable chunks , often resulting in embeddings with 768 to 1024 dimensions. Images are handled differently. Instead of looking at pixels, the Vision Transformer (ViT) is an architecture that splits images into 16x16 pixel patches , converting each patch into a 768-dimensional vector.

Audio is perhaps the trickiest. Raw audio is too noisy, so it's converted into spectrograms or Mel-frequency cepstral coefficients (MFCCs). The Audio Spectrogram Transformer (AST) is a model that processes these visual representations of sound to generate embeddings at 100ms intervals. Video adds another layer of pain: time. To handle this, models use tubelet embedding, which captures 16-frame segments to maintain both spatial and temporal context.

| Modality | Primary Encoder | Input Format | Typical Embedding Dim | Key Challenge |

|---|---|---|---|---|

| Text | DistilBERT / BERT | BPE Tokens | 768 - 1024 | Semantic nuance |

| Image | ViT-Base | 16x16 Patches | 768 | Spatial invariance |

| Audio | ASST / SSAST | Mel Spectrograms | 768 | Temporal noise |

| Video | VivIT / VATT | Tubelets | 768+ | Temporal alignment |

Solving the Alignment Problem

Having four different encoders is great, but if the text embedding is in one "neighborhood" and the image embedding is in another, the model can't connect them. This is where contrastive learning comes in. The most effective approach today involves using a triplet loss function. Essentially, the model is shown a "positive" pair (a photo of a cat and the word "cat") and a "negative" pair (a photo of a cat and the word "car").

The model's job is to pull the positive pair closer together in the embedding space while pushing the negative pair apart. For example, the VATT is a Video-Audio-Text Transformer designed for joint representation learning . By using a margin of 0.2 in its loss function, VATT creates a tight cluster of related concepts. The results are concrete: properly aligned embeddings can reach a 78.3% recall@10 on the MSR-VTT video-text retrieval task, which blows unimodal baselines (62.1%) out of the water.

There are two main ways to structure these transformers. The two-stream approach, used by models like ViLBERT, keeps separate transformers for each modality and lets them "talk" via cross-attention. It's accurate but heavy, often requiring 23% more parameters. The single-stream approach, like VATT, feeds everything into one shared backbone. This is leaner-reducing parameters by about 18%-and usually just as effective for most tasks.

The Reality of Implementation: Expectations vs. Code

If you're a developer looking at this, be warned: the gap between a research paper and a working pipeline is wide. Community feedback on GitHub and Reddit suggests that multimodal pipelines are roughly 3.2 times more complex to write than unimodal ones. The biggest headache? Synchronization. Getting an audio clip to align perfectly with a specific frame in a video is a recurring nightmare for almost 80% of developers in these circles.

Hardware requirements are also steep. You can't train these on a laptop. To hit state-of-the-art performance, you typically need at least 8 NVIDIA A100 GPUs with 80GB of VRAM each. However, the good news is that inference is much lighter. Once the model is trained, a single A100 can handle real-time processing of 224x224 video at 30fps.

Pro tip: don't start from scratch. Transfer learning is a superpower here. Some researchers have reported that fine-tuning a pre-trained VATT model on a niche dataset (like medical videos) required only 1,200 examples to reach 85% accuracy. Compare that to 15,000 examples needed for a traditional CNN baseline, and the efficiency becomes obvious.

Emergent Capabilities and the "Understanding" Debate

One of the most fascinating parts of this tech is "emergent capabilities." Dr. Fei-Fei Li has pointed out that adding a modality can improve performance even on tasks that don't require it. For instance, visual question answering (VQA) scores can jump by 15% simply by adding audio context, even if the question is only about the image. It's as if the audio provides a "hint" that helps the model anchor its visual understanding.

However, not everyone is convinced we've reached "intelligence." Meta's Chief AI Scientist, Yann LeCun, argues that most of these systems are just "glorified alignment engines." He points out a 43.7% failure rate on the Multimodal Reasoning Benchmark. The critique is simple: the model can match a picture of a dog to the word "dog," but it can't actually reason about why a dog is behaving a certain way in a new environment. It's pattern matching, not thinking.

The Market Shift: From Lab to Enterprise

Despite the academic wins, commercial adoption is moving slowly. While 78% of Fortune 500 companies have played around with multimodal pilots, only about 22% have actually deployed them at scale. The biggest winners are in video analytics and medical imaging. However, the regulatory landscape is a major speed bump. GDPR compliance can add up to 22% to the cost of implementation, especially when dealing with sensitive audio and video data.

We are now seeing a move toward "foundation model surgery," where developers adapt pre-trained text models for vision or audio using 90% less data. This could lower the barrier to entry for smaller companies who can't afford a cluster of A100s. We're also seeing the rise of "modality dropout"-a training technique introduced in VATT-v2 that makes models more robust when one input (like audio) is missing, improving robustness by nearly 23%.

What is the main difference between single-stream and two-stream transformers?

Two-stream transformers use separate processing paths for each modality and connect them using cross-attention mechanisms. This is often more accurate for complex VQA tasks but requires more parameters. Single-stream transformers feed all modality embeddings into one shared backbone, which is more computationally efficient and uses about 18% fewer parameters while maintaining similar performance.

Why is audio the hardest modality to align?

Audio is inherently noisier and more variable than text or images. While text has clear tokens and images have spatial structures, audio requires conversion to spectrograms which still struggle with word error rates. For example, spectrogram-based approaches often see significantly higher error rates on benchmarks like LibriSpeech compared to the near-perfect accuracy of text-only systems.

How much data is needed to fine-tune a multimodal model?

Thanks to transfer learning, you need far less data than you would for a model trained from scratch. In some medical video use cases, researchers have reached 85% accuracy with as few as 1,200 examples when starting from a pre-trained VATT model, whereas a CNN baseline would have required 15,000 examples.

What is contrastive learning and why is it used for alignment?

Contrastive learning is a technique that teaches a model to recognize similar and dissimilar pairs of data. By using a triplet loss function, the model is forced to minimize the distance between a matching text-image pair (positive) and maximize the distance between a mismatch (negative). This creates the shared embedding space necessary for cross-modal retrieval.

What are the hardware requirements for training these models?

Training state-of-the-art multimodal transformers is resource-intensive. A standard setup typically requires at least 8 NVIDIA A100 GPUs (each with 80GB VRAM) and 512GB of system RAM. However, for inference (running the model), a single A100 is usually sufficient to process 224x224 video at 30fps in real-time.

Next Steps and Troubleshooting

If you're starting a multimodal project, begin by selecting your encoders based on latency needs. If you need speed, DistilBERT for text and ViT-Base for images are the gold standard. If your audio-video sync is off, check your sampling rates; ensuring audio is at 16kHz and images are exactly 224x224 is the first step to solving most alignment drift.

For those facing "alignment fatigue"-where the model just isn't converging-experiment with your temperature parameters. The VATT research suggests an optimal range between 0.05 and 0.15 for InfoNCE loss. If your loss is oscillating, try a learning rate between 1e-4 and 5e-5, as this range is linked to 87% of successful implementations on GitHub.