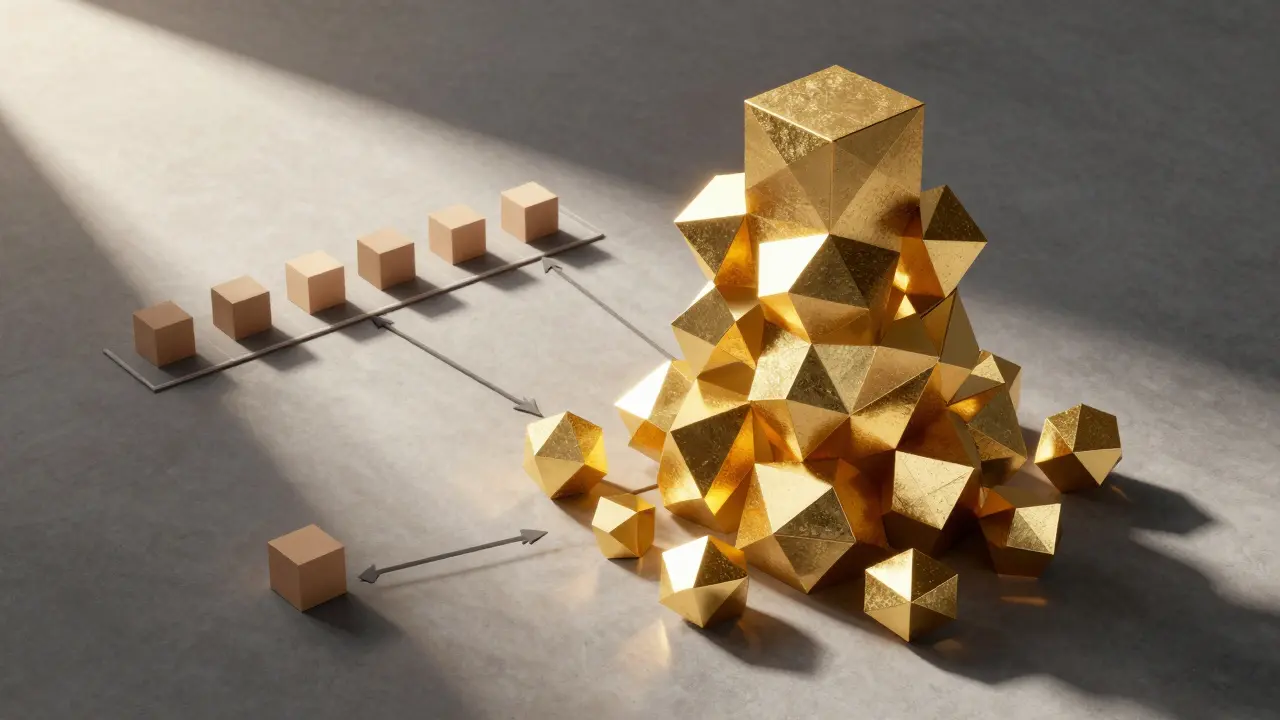

Many companies start their AI journey by plugging into a polished API, but as the token count climbs, the monthly bill starts to look like a mortgage payment. The temptation is obvious: rent a few GPUs, deploy an open-source model, and stop paying the "token tax." However, switching to self-hosted LLMs is not as simple as swapping a URL. If you only look at the price per token, you're ignoring the invisible costs that can make self-hosting a financial disaster for small-to-mid-sized teams.

The Token Trap: Why Per-Token Pricing is Deceptive

When you use an API from a provider like OpenAI or Anthropic, the pricing is straightforward. You pay for what you use. On the surface, self-hosting looks like a steal. For instance, running a 7B parameter model on an H100 spot instance costs roughly $1.65 per hour. At 70% utilization, that's about $10,000 a year for a machine that can pump out tokens at a fraction of the cost of a premium API.

But here is the catch: the GPU is the cheapest part of the equation. The real expense is the "human middleware." To get a model from a Hugging Face repository to a production-ready endpoint, you need engineers. You need someone to manage vLLM or Ollama, monitor for memory leaks, and handle the inevitable crashes. When you add engineering salaries, MLOps overhead, and the cost of idle hardware during low-traffic hours, your Total Cost of Ownership (TCO) often lands at 3 to 5 times the raw GPU rental price.
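The arithmetic behind those figures can be sketched in a few lines. This is a rough illustration, not a pricing model: it assumes the spot instance is billed only for the hours it is actually in use (which is how $1.65 per hour at 70% utilization works out to about $10,000 a year), and it applies the 3x to 5x TCO multiplier as a flat factor. The function names are made up for this example.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def annual_gpu_cost(hourly_rate: float, utilization: float) -> float:
    """Yearly rental cost, assuming billing only for the hours in use."""
    return hourly_rate * HOURS_PER_YEAR * utilization


def tco_range(gpu_cost: float, low_mult: float = 3.0,
              high_mult: float = 5.0) -> tuple[float, float]:
    """Apply the article's 3x-5x overhead multiplier as a flat factor."""
    return gpu_cost * low_mult, gpu_cost * high_mult


gpu = annual_gpu_cost(hourly_rate=1.65, utilization=0.70)
low, high = tco_range(gpu)
print(f"GPU rental only: ${gpu:,.0f} per year")
print(f"Estimated TCO:   ${low:,.0f} to ${high:,.0f} per year")
```

Under these assumptions the raw rental comes to roughly $10,100 a year, while the all-in figure lands somewhere between about $30,000 and $50,000.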

Finding Your Break-Even Point

So, when does the math actually flip in favor of hosting your own? It all comes down to your monthly volume. If you are processing fewer than 2 million tokens a day (roughly 60 million a month), stick with the API. The operational overhead of maintaining a server will almost always outweigh any token savings at this scale.

Once you reach the range of 500 million to 5 billion tokens per month, you enter the "gray zone." Pure self-hosting is still risky due to engineering costs, but using brokered GPU services or managed platforms like Hugging Face or DeepSeek AI can save you 40-50% compared to the big cloud hyperscalers.

The real victory happens at 10 billion tokens per month. At this massive scale, the amortization of infrastructure is so efficient that your cost drops to roughly $0.001-$0.002 per 1,000 tokens. For a company moving this much data, the shift can be staggering. One fintech firm reportedly slashed its monthly AI spend from $47,000 down to $8,000 by moving to a hybrid self-hosted setup. They didn't just save money; they gained total control over their data latency and privacy.

| Monthly Volume (tokens) | Cheapest Option | Primary Driver | Risk Level |
|---|---|---|---|
| < 60 Million | Standard API | Low overhead, zero maintenance | Low |
| 60M - 500 Million | Tiered API / Small Self-Host | Balance of cost vs. engineering | Medium |
| 500M - 5 Billion | Managed GPU Platforms | Infrastructure efficiency | Medium |
| 10 Billion+ | Full Self-Hosting | Massive amortization of GPU cost | High (Ops heavy) |
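As a quick way to apply the table, the tiers can be written as a lookup on monthly token volume. This is only a sketch of the thresholds listed above. The table does not cover the 5 billion to 10 billion range, so treating that band as still belonging to the managed-platform tier is an assumption of this example, and `cheapest_option` is a hypothetical helper name.

```python
def cheapest_option(tokens_per_month: int) -> str:
    """Map a monthly token volume to the table's suggested option."""
    if tokens_per_month < 60_000_000:
        return "Standard API"
    if tokens_per_month < 500_000_000:
        return "Tiered API / Small Self-Host"
    if tokens_per_month < 10_000_000_000:
        # The table lists 500M-5B for this tier and says nothing about
        # 5B-10B; extending the tier up to 10B is an assumption.
        return "Managed GPU Platforms"
    return "Full Self-Hosting"


for volume in (20_000_000, 200_000_000, 2_000_000_000, 12_000_000_000):
    print(f"{volume:>14,} tokens/month -> {cheapest_option(volume)}")
```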

The Hidden Costs of "Free" Open Source

It is easy to get blinded by the phrase "open source," but in production, nothing is free. Beyond the GPU, you have to account for these six economic drains:

- Engineering Labor: The time spent setting up Docker containers and optimizing weights.

- MLOps Infrastructure: Monitoring tools and logging that catch the model when it hallucinates or crashes.

- Hardware Decay: If you buy your own chips, you face depreciation and replacement cycles.

- Incident Response: The cost of an engineer waking up at 3 AM because a GPU kernel panicked.

- Opportunity Cost: Every hour your best dev spends fixing a server is an hour they aren't building new product features.

- Idle Capacity: If your model is 10% utilized at night, you are paying for 90% wasted electricity and compute.
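The idle-capacity point is easy to put a number on. The sketch below assumes an always-on instance that is billed for every hour whether or not it serves traffic, and reuses the $1.65 hourly rate quoted earlier purely as an illustration. The function names are invented for this example.

```python
def cost_per_useful_hour(hourly_rate: float, utilization: float) -> float:
    """Effective price of one hour of real work on an always-on GPU."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_rate / utilization


def wasted_spend(hourly_rate: float, utilization: float, hours: float) -> float:
    """Money spent on hours when the GPU sat idle."""
    return hourly_rate * hours * (1 - utilization)


rate = 1.65  # USD per hour, the article's H100 spot figure
print(f"At 70% utilization: ${cost_per_useful_hour(rate, 0.70):.2f} per useful hour")
print(f"At 10% utilization: ${cost_per_useful_hour(rate, 0.10):.2f} per useful hour")
print(f"Idle spend over 8 night hours at 10%: ${wasted_spend(rate, 0.10, 8):.2f}")
```

At 10% utilization, each hour of useful work effectively costs about ten times the sticker price.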

The Hybrid Strategy: The Smart Way to Scale

You don't have to choose one or the other. The most mature organizations use a routing strategy. They don't send a simple "summarize this email" task to a massive, expensive frontier model. Instead, they route commodity tasks (classification, basic extraction, or FAQ responses) to a small, self-hosted 7B or 13B parameter model.

They reserve the "big guns" (like GPT-4 or Claude Opus) only for complex reasoning, high-stakes coding, or nuanced creative work. This hybrid approach typically cuts costs by 40-70% without a noticeable drop in quality because most AI tasks don't actually require a trillion-parameter brain.
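In its simplest form, that routing layer is a lookup on task type. The sketch below is a minimal illustration under stated assumptions: the task labels, the `Route` type, and the model names `self-hosted-7b` and `frontier-api` are all hypothetical placeholders, and a real router would also need fallbacks, logging, and probably a way to classify incoming requests.

```python
from dataclasses import dataclass

# Commodity task types named in the article; the labels are illustrative.
COMMODITY_TASKS = {"classification", "extraction", "faq"}


@dataclass(frozen=True)
class Route:
    model: str
    self_hosted: bool


# Hypothetical model identifiers, not real endpoints.
SMALL_MODEL = Route(model="self-hosted-7b", self_hosted=True)
FRONTIER_MODEL = Route(model="frontier-api", self_hosted=False)


def route(task_type: str) -> Route:
    """Send commodity tasks to the small model, everything else upstream."""
    if task_type.strip().lower() in COMMODITY_TASKS:
        return SMALL_MODEL
    return FRONTIER_MODEL


for task in ("classification", "faq", "complex_reasoning", "coding"):
    print(f"{task:>18} -> {route(task).model}")
```

Defaulting unknown task types to the frontier model is a deliberate choice here: it costs more, but it avoids silently degrading quality on tasks nobody has audited yet.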

Measuring Success: Outcomes over Tokens

One of the biggest mistakes in cost modeling is optimizing for the lowest price per token. This is a vanity metric. If you deploy a cheap self-hosted model that fails 50% of the time, it is actually more expensive than a premium API that works the first time. Why? Because you're paying for the compute to run the model twice, plus the engineering time to debug the failure.

Switch your focus to cost-per-successful-outcome. A 70B parameter model might cost ten times more to run than a 7B model, but if it completes a complex task in one shot while the 7B model requires five failed attempts and a human correction, the 70B model is actually the cheaper choice.
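To make the comparison concrete, here is a small sketch of cost-per-successful-outcome using the ratios from the paragraph above. The units are arbitrary (one 7B attempt costs 1.0), and the human correction cost of 20.0 is a made-up figure for illustration. Working through it suggests the human correction is what tips the balance: on compute alone, five cheap attempts still cost less than one attempt at ten times the price.

```python
def expected_attempts(success_rate: float) -> float:
    """Average number of tries until one succeeds, assuming independent retries."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in the range (0, 1]")
    return 1 / success_rate


def cost_per_successful_outcome(cost_per_attempt: float, attempts: float,
                                human_fix_cost: float = 0.0) -> float:
    """Total spend to get one usable result."""
    return cost_per_attempt * attempts + human_fix_cost


# Arbitrary units: one 7B attempt = 1.0, one 70B attempt = 10.0.
small_compute_only = cost_per_successful_outcome(1.0, attempts=5)
small_with_human = cost_per_successful_outcome(1.0, attempts=5, human_fix_cost=20.0)
large_one_shot = cost_per_successful_outcome(10.0, attempts=1)

print(f"7B, compute only:       {small_compute_only}")
print(f"7B, with human fix:     {small_with_human}")
print(f"70B, one shot:          {large_one_shot}")
print(f"Tries at 50% success:   {expected_attempts(0.5)}")
```

With these illustrative numbers the 70B model wins only once the human correction is counted, which is why engineering and review time belongs in the metric alongside compute.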

Decision Framework: Should You Self-Host?

Stop guessing and ask these five questions. If you answer "Yes" to three or more, it's time to look at self-hosting.

- Is our volume over 10 billion tokens monthly? (The financial tipping point).

- Is our data so sensitive that it cannot leave our VPC? (The compliance mandate).

- Do we need deep fine-tuning that APIs don't allow? (The customization requirement).

- Do we already have a dedicated MLOps/DevOps team? (The capacity check).

- Can an open-source model reach 95% of the required accuracy? (The quality check).
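The checklist above can be encoded as a simple tally so the answers are recorded rather than debated from memory. This is a sketch only: the field names are invented for this example, and the threshold of three "Yes" answers comes straight from the framework.

```python
from dataclasses import dataclass, fields


@dataclass
class SelfHostChecklist:
    volume_over_10b_tokens_monthly: bool      # the financial tipping point
    data_cannot_leave_vpc: bool               # the compliance mandate
    needs_deep_fine_tuning: bool              # the customization requirement
    has_dedicated_mlops_team: bool            # the capacity check
    open_model_reaches_95pct_accuracy: bool   # the quality check

    def yes_count(self) -> int:
        """Number of questions answered 'Yes'."""
        return sum(bool(getattr(self, f.name)) for f in fields(self))

    def should_look_at_self_hosting(self, threshold: int = 3) -> bool:
        """True when at least `threshold` answers are 'Yes'."""
        return self.yes_count() >= threshold


answers = SelfHostChecklist(
    volume_over_10b_tokens_monthly=False,
    data_cannot_leave_vpc=True,
    needs_deep_fine_tuning=True,
    has_dedicated_mlops_team=True,
    open_model_reaches_95pct_accuracy=False,
)
print(answers.yes_count(), answers.should_look_at_self_hosting())
```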

Is self-hosting always cheaper if I have the GPUs?

No. Having the hardware is only one part. You still have to pay for the engineers to maintain the stack and the electricity to run it. For low-volume users, the engineering salary alone makes self-hosting far more expensive than a simple API subscription.

Which models are best for cost-effective self-hosting?

Smaller models below 14B parameters are the gold standard for cost-efficiency, especially for simple tasks. Mid-sized models like Gemma 27B or Qwen 30B provide a great balance of reasoning power and cost, often beating out mid-tier APIs on a price-to-performance basis.

What is a 'brokered GPU service'?

These are platforms like SiliconFlow or Fireworks AI that host open-source models for you. They provide the cost benefits of open-source models without requiring you to manage the actual hardware, acting as a middle ground between full self-hosting and big-tech APIs.

How does data privacy affect the cost model?

Privacy isn't just a legal requirement; it's a cost driver. For healthcare or finance, the cost of a data breach through a public API can far outweigh any savings. In these cases, self-hosting is an insurance policy that pays for itself by sharply reducing the risk of third-party data exposure.

Can I use a hybrid approach with different providers?

Yes, and you should. By routing simple tasks to a self-hosted 7B model and complex tasks to GPT-4 or Claude, you can capture 40-70% savings. This prevents you from overpaying for simple tasks while ensuring high quality for difficult ones.

Next Steps and Troubleshooting

For the Prototyper: Don't build a server yet. Use APIs to find your exact token usage patterns. Once you have three months of stable data, run a TCO analysis to see if the break-even point has been hit.

For the Scale-Up: If your API bills are skyrocketing but you lack a DevOps team, look into managed open-source providers. This gives you the per-token efficiency of open source without the headache of server management.

For the Enterprise: Audit your tasks. You'll likely find that 80% of your API calls are for tasks a small 7B model could handle. Start a pilot program routing just those 80% to a self-hosted instance to prove the ROI before migrating everything.

Kendall Storey

April 21, 2026 AT 10:05

The hybrid routing strategy is absolute fire. Most teams are just brute-forcing everything through a frontier model and bleeding cash while their latency spikes. If you aren't using a small model for the low-hanging fruit and only hitting the heavy hitters for the complex reasoning, you're basically just lighting money on fire. The TCO on a fine-tuned 7B for specific classification is a complete game changer for the bottom line. Keep pushing this approach because a lot of devs are still stuck in the "one model to rule them all" mindset which is totally inefficient for production workloads

Kevin Hagerty

April 23, 2026 AT 03:19

wow such a deep analisis... imagine thinkin a few h100s fix everythin lol. probablly just wrote this to sell some shitty mlopps course. a lot of fluff here

Janiss McCamish

April 23, 2026 AT 14:37

The cost-per-successful-outcome metric is the only way to actually measure ROI. Using a cheap model that fails five times is just a waste of compute and human time. Focus on the outcome, not the token price.

Yashwanth Gouravajjula

April 25, 2026 AT 03:31

Spot on. Hardware is cheap, talent is expensive.

Richard H

April 25, 2026 AT 05:37

We need to be bringing these server farms back to American soil. Relying on these fragmented brokered services or foreign-influenced open source models is a massive security risk for our infrastructure. If we're talking about 10 billion tokens, that's critical national infrastructure. We should be investing in our own domestic silicon and hosting it in our own backyards where we can actually control the security and the power grid without relying on some middleman in another hemisphere. It is a matter of digital sovereignty and economic dominance. Why are we even discussing

Meredith Howard

April 25, 2026 AT 20:08

The perspective on data privacy being an insurance policy is quite an interesting way to view the financial model

It is often overlooked that the peace of mind provided by a VPC environment cannot be easily quantified in a simple spreadsheet but remains a primary driver for the healthcare sector

The mention of human middleware is a very necessary addition to the conversation as many people forget the salary cost of a full time engineer who must maintain the uptime of these systems

It seems reasonable to suggest that the break even point varies significantly based on the existing team's skill set and current infrastructure

The hybrid approach allows for a very gentle transition for companies that are not yet ready for full self hosting

One might consider that the environmental cost of running idle GPUs is another factor to include in the TCO analysis

It is helpful to have a clear decision framework to avoid impulsive infrastructure changes

The distinction between vanilla open source and production ready deployments is often blurred in online discussions

Most organizations likely fall into that gray zone where managed platforms provide the best of both worlds

The emphasis on outcomes over tokens shifts the focus back to business value rather than technical vanity

It is interesting how the cost of a single successful outcome can be drastically lower if the model is appropriately sized for the task

The suggestion to use APIs for prototyping first is a very practical piece of advice for any startup

Many teams struggle with the transition from a prototype to a scaled production environment

The 95 percent accuracy threshold is a challenging but fair benchmark for switching to open source

Overall this provides a very comprehensive view of the current AI economic landscape