Have you ever wondered how a language model knows what word comes next? It’s not guessing. It’s not reading your mind. It’s doing math - quietly, fast, and with surprising precision. Every time you ask a chatbot a question or get a suggested reply, the model is calculating a token probability distribution - a list of possible next words, each with a score telling you how likely it is to appear. This isn’t magic. It’s the core of how all modern language models work.

How the Model Sees Text: Tokens, Not Words

Before a model can predict the next word, it has to break your input into pieces called tokens. A token isn’t always a full word. "Running" might become one token. "Unhappiness" could be split into "un" and "happiness." Even punctuation like "!" or "." gets its own token. This system, called tokenization, lets models handle rare words, typos, and even emojis without needing a dictionary of every possible word in every language.
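To make the splitting idea concrete, here is a minimal sketch of a greedy longest-match tokenizer. The tiny `VOCAB` set and the `tokenize` function are invented for illustration. Real tokenizers learn their vocabularies from data and usually rely on algorithms such as byte-pair encoding, so treat this as a toy, not as how any particular model does it.

```python
# Toy vocabulary, invented for illustration; real tokenizers learn theirs from data.
VOCAB = {"un", "happiness", "running", "!", "."}

def tokenize(text, vocab=VOCAB):
    """Greedy longest-match split; unknown characters become one-character tokens."""
    text = text.lower()
    tokens = []
    i = 0
    while i < len(text):
        if text[i].isspace():
            i += 1
            continue
        # Try the longest candidate starting at position i, then shrink it.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # Nothing in the vocabulary matched: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens

print(tokenize("Unhappiness!"))  # ['un', 'happiness', '!']
```

The single-character fallback is what lets a scheme like this cope with typos and unseen words: anything can be spelled out from smaller pieces.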

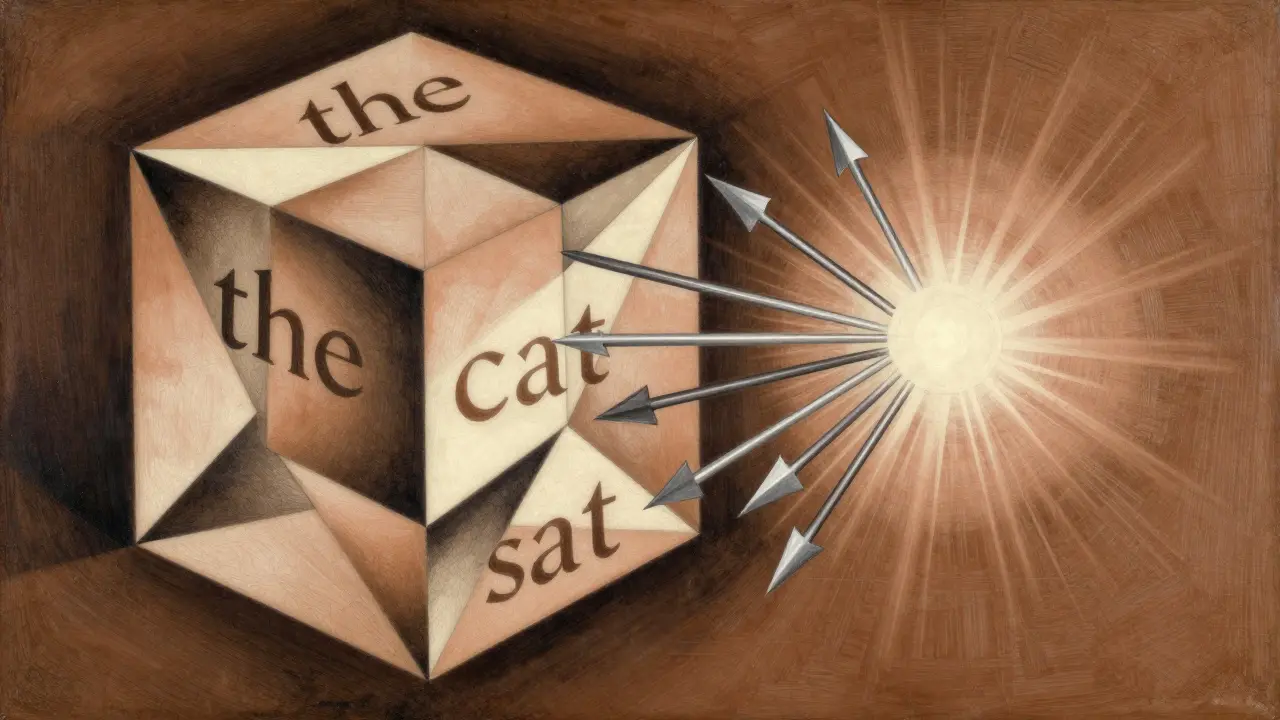

Once your text is turned into tokens, the model looks at all of them together - not just the last one. That’s because context matters. If you type "The cat sat on the," the model doesn’t just think about "the" - it remembers "cat," "sat," and "on." It uses this full history to build a mental picture of what’s happening. This is done through layers of neural networks, especially transformers, which let each token talk to every other token in the input. The result? A rich, context-aware representation of everything that came before.

The Math Behind the Prediction: Logits and Softmax

After processing the context, the model spits out a list of raw scores - one for every possible token in its vocabulary. These are called logits. Think of them like ungraded test scores: high means "likely," low means "unlikely," but they’re not probabilities yet.

To turn logits into real probabilities, the model applies a mathematical function called softmax. Softmax takes all those raw scores and reshapes them so they add up to exactly 1.0. That turns them into a valid probability distribution. For example, if the model is deciding between "blue," "purple," and "red" after "violets are," softmax might give you:

- "blue": 0.9985 (99.85% chance)

- "purple": 0.0009 (0.09% chance)

- "red": 0.0004 (0.04% chance)

- Every other color: less than 0.0001%

That’s not random. That’s the model’s trained understanding of language patterns. It’s seen millions of texts where "violets are blue" appears far more often than "violets are purple." So it assigns a near-certainty to "blue."
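Softmax itself is only a few lines of code. The sketch below uses made-up logits for "blue," "purple," and "red" (the real values depend entirely on the model), exponentiates them, and divides by the total so the results sum to 1.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits, chosen only for illustration.
tokens = ["blue", "purple", "red"]
logits = [10.0, 3.0, 2.2]
probs = softmax(logits)

for token, p in zip(tokens, probs):
    print(f"{token}: {p:.4f}")
```

Because of the exponential, a modest lead in logits turns into a very large lead in probability.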

Choosing the Next Token: More Than Just the Most Likely

Now that we have probabilities, how do we pick the next token? The simplest way is greedy sampling (more often called greedy decoding): always pick the token with the highest probability. It’s fast. It’s predictable. And it’s boring. Given the same prompt, you’ll get the same output every time. It sounds robotic because it is.
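In code, the greedy choice is just an argmax over the distribution. A minimal sketch, reusing the example probabilities from above (`greedy_pick` is an illustrative name, not a library function):

```python
def greedy_pick(probs):
    """Return the token with the highest probability."""
    return max(probs, key=probs.get)

probs = {"blue": 0.9985, "purple": 0.0009, "red": 0.0004}
print(greedy_pick(probs))  # blue
```

There is no randomness anywhere in this function, which is exactly why the output never changes.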

Real applications need variation. So instead of always picking the top choice, we sample randomly - but not evenly. We use the probabilities as weights. In our example, "blue" has a 99.85% chance of being picked, so it’ll win almost every time. But once in a while, "purple" gets picked too. That’s multinomial sampling. It’s stochastic. It’s creative. But it has a problem: when the vocabulary is huge (over 100,000 tokens), you’re still giving tiny chances to nonsense words like "qzxy" or "fjkl."
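Weighted sampling can be sketched with Python’s standard `random.choices`. The probabilities are the example values from earlier, and the fixed seed is only there to make the run repeatable.

```python
import random
from collections import Counter

def sample_token(probs, rng=random):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = list(probs.values())
    return rng.choices(tokens, weights=weights, k=1)[0]

probs = {"blue": 0.9985, "purple": 0.0009, "red": 0.0004}
rng = random.Random(0)  # seeded so the experiment is repeatable

# Draw 10,000 times and count how often each token wins.
counts = Counter(sample_token(probs, rng) for _ in range(10_000))
print(counts.most_common())
```

Over many draws "blue" dominates, but the rare tokens are never fully ruled out. That is the behavior the filters in the next section are meant to tame.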

Top-k and Top-p Sampling: Smarter Filters

To fix that, we use smarter filters. Top-k sampling cuts the list down to the top k most likely tokens. Say k=10. The model ignores all other 99,990 tokens and only considers the 10 with the highest probabilities. Now you’re not wasting time on gibberish. You’re narrowing the field to plausible options. But choosing k is tricky. Too low, and you lose creativity. Too high, and you let in noise again.
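Here is a minimal sketch of a top-k filter. The four-token distribution is invented for illustration, and `top_k_filter` is a hypothetical helper. After the cut, the surviving probabilities are rescaled so they sum to 1 again.

```python
def top_k_filter(probs, k):
    """Keep the k most likely tokens and renormalize so they sum to 1."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept = ranked[:k]
    total = sum(p for _, p in kept)
    return {token: p / total for token, p in kept}

# Hypothetical distribution with one nonsense token in the tail.
probs = {"blue": 0.50, "purple": 0.30, "red": 0.15, "qzxy": 0.05}
filtered = top_k_filter(probs, k=2)
print(filtered)
```

With k=2 only "blue" and "purple" survive, and "qzxy" can no longer be drawn at all.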

That’s where top-p sampling (or nucleus sampling) shines. Instead of fixing the number of tokens, you fix the probability threshold. Say p=0.9. The model adds tokens one by one, from highest to lowest probability, until their combined probability reaches 90%. Then it stops and samples only from that set. It doesn’t care how many tokens that is - 3? 20? It doesn’t matter. If the model is super confident (like with "blue" at 99.85%), a single token can clear the threshold on its own. If it’s unsure, it might keep 50. It adapts. This method keeps the output creative but grounded: far less random nonsense, and more natural variation than always taking the top choice.
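The adaptive behavior is easy to see in a small sketch. Both distributions below are made up for illustration, and `top_p_filter` is a hypothetical helper that keeps the smallest set of top tokens whose cumulative probability reaches p.

```python
def top_p_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept = []
    cumulative = 0.0
    for token, prob in ranked:
        kept.append((token, prob))
        cumulative += prob
        if cumulative >= p:
            break
    # Renormalize the survivors so they form a valid distribution again.
    total = sum(prob for _, prob in kept)
    return {token: prob / total for token, prob in kept}

# Hypothetical distributions: one confident, one unsure.
confident = {"blue": 0.9985, "purple": 0.0009, "red": 0.0004, "green": 0.0002}
unsure = {"pizza": 0.40, "sushi": 0.30, "tacos": 0.25, "soup": 0.05}

print(list(top_p_filter(confident, 0.9)))  # ['blue']
print(list(top_p_filter(unsure, 0.9)))     # ['pizza', 'sushi', 'tacos']
```

The same threshold yields one candidate in the confident case and three in the unsure one, without anyone having to pick a k.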

Temperature: The Creativity Dial

There’s another knob you can turn: temperature. It’s applied before softmax: every logit is divided by the temperature. A temperature of 1.0 leaves the logits unchanged. A temperature below 1.0 (say, 0.3) widens the gaps between logits, so high-probability tokens become even more likely. The distribution gets sharper - like a laser beam. Output becomes predictable, focused, almost mechanical. Perfect for technical writing or code generation.

Go above 1.0 (like 1.5 or 2.0), and the distribution flattens. Low-probability tokens get a boost. The model starts taking risks. "Violets are purple" suddenly has a fighting chance. This is great for poetry, storytelling, or brainstorming. But go too high - say 3.0 - and you get nonsense. The model hasn’t forgotten what it learned; the distribution is just so flat that its learned preferences get drowned out. It’s like turning up the static on a radio.
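A quick sketch shows the effect. It assumes the common formulation in which logits are divided by the temperature before softmax. The logits are invented for illustration.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Divide logits by the temperature, then apply softmax."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [4.0, 2.0, 1.0]  # hypothetical scores for three tokens
for t in (0.3, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

As the temperature rises, the top token’s share shrinks and the tail grows. The ranking of the tokens never changes, only how lopsided the distribution is.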

Why This Matters: Understanding Model Behavior

Knowing how probability distributions work isn’t just for engineers. It’s a key to trusting AI. If you see that a model gives "blue" a 99.85% chance after "violets are," you can tell it’s following a strong pattern from its training data instead of making a wild guess. But if it suddenly picks "green" with 60% probability, something’s off. Maybe the training data was biased. Maybe the prompt was misphrased. You can dig into the logits, check the top-k tokens, and trace the decision.

Developers use this to debug. If a model keeps generating offensive content, you can check: Is it because the probability distribution favors harmful phrases? Can you tweak temperature or top-p to avoid it? Can you filter out certain tokens before sampling? This level of control is what separates tools from toys.
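One way to filter out tokens before sampling is to set their logits to negative infinity, so softmax gives them a probability of exactly zero. The sketch below assumes that approach. The token names and scores are placeholders, and `mask_banned` is a hypothetical helper, not a function from any library.

```python
import math

def mask_banned(logits, banned):
    """Return a copy of the logits with banned tokens set to -inf."""
    return {tok: (-math.inf if tok in banned else score)
            for tok, score in logits.items()}

def softmax(logits):
    """Softmax over a dict of token -> logit (at least one logit must be finite)."""
    m = max(logits.values())
    exps = {tok: math.exp(score - m) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Placeholder tokens and scores, for illustration only.
logits = {"hello": 2.0, "badword": 3.0, "friend": 1.0}
probs = softmax(mask_banned(logits, banned={"badword"}))
print(probs)
```

The masked token drops to zero and the remaining probability mass is shared among the tokens that are left.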

What Makes a Distribution Hard to Predict?

Not all probability distributions are created equal. Some research suggests that models are good at reproducing distributions that are either very certain (one token dominates) or very random (all tokens have similar probabilities). But they struggle with mid-range entropy - where a few tokens are plausible, but none stand out. Think of it like choosing between three good restaurants. The model doesn’t know which one you’ll pick. It’s harder to guess.
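Entropy puts a number on how spread out a distribution is. The sketch below computes Shannon entropy in bits for three made-up distributions: one nearly certain, one mid-range, and one uniform.

```python
import math

def entropy_bits(probs):
    """Shannon entropy in bits; zero-probability entries contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical four-token distributions.
certain = [0.97, 0.01, 0.01, 0.01]
mid_range = [0.40, 0.30, 0.20, 0.10]
uniform = [0.25, 0.25, 0.25, 0.25]

for name, dist in [("certain", certain), ("mid-range", mid_range), ("uniform", uniform)]:
    print(f"{name}: {entropy_bits(dist):.3f} bits")
```

For four tokens, the uniform case reaches the maximum of 2 bits. The "three good restaurants" situation sits in between the two extremes.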

Even more surprising: models are better at copying distributions they’ve seen before - even if those distributions came from a completely different model. That means language models aren’t just memorizing phrases. They’re learning patterns of likelihood. They’ve internalized how humans assign probabilities to words. That’s why they feel so human.

Real-World Use: From Chatbots to Code

When you’re chatting with an AI assistant, top-p sampling at p=0.9 and temperature=0.8 is probably what you’re getting. It balances creativity and coherence. When you’re asking for code, temperature might drop to 0.2. You don’t want the AI to invent new syntax. You want it to be precise.
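Putting the pieces together, a single sampling step might look like the sketch below. The order of operations (temperature, then softmax, then top-p, then a weighted draw) is an assumption made for illustration, as are the logits and the `generate_next` name. Real systems may combine these steps differently.

```python
import math
import random

def generate_next(logits, temperature=0.8, top_p=0.9, rng=random):
    """Temperature-scale, softmax, apply a top-p cut, then sample one token."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    m = max(scaled.values())
    exps = {tok: math.exp(score - m) for tok, score in scaled.items()}
    total = sum(exps.values())
    probs = {tok: e / total for tok, e in exps.items()}

    # Keep the smallest set of top tokens whose cumulative probability reaches top_p.
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept, cumulative = [], 0.0
    for tok, p in ranked:
        kept.append((tok, p))
        cumulative += p
        if cumulative >= top_p:
            break

    tokens = [tok for tok, _ in kept]
    weights = [p for _, p in kept]  # random.choices accepts unnormalized weights
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical logits for the next token in a piece of code.
logits = {"return": 5.0, "yield": 2.0, "banana": 0.1}
print(generate_next(logits, temperature=0.8, top_p=0.9))  # chat-style settings
print(generate_next(logits, temperature=0.2, top_p=0.9))  # code-style settings
```

At temperature 0.2 the top token alone clears the 0.9 threshold for these logits, so the code-style call always returns "return".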

Some systems even use a second model to explain why the first model chose a certain token. The second model reads the probability distribution and writes a natural-language explanation: "The model chose 'blue' because it's the most common color paired with 'violets' in training data." This adds transparency. It turns a black box into something you can understand.

Final Thought: It’s All About Control

Token probability distributions are the hidden engine behind every AI-generated sentence. They’re not just math. They’re the bridge between human language and machine intelligence. By understanding how they work - how softmax, top-k, top-p, and temperature shape them - you’re not just learning how AI thinks. You’re learning how to guide it. And that’s the real power.

What is the difference between greedy sampling and sampling from the probability distribution?

Greedy sampling always picks the token with the highest probability - it’s predictable and fast, but often repetitive and robotic. Sampling from the probability distribution means choosing randomly, weighted by those probabilities. That introduces variation - sometimes you get the most likely word, sometimes a less likely one. It feels more human, but without controls, it can also produce nonsense.

Why does temperature affect how creative a model sounds?

Temperature rescales the logits before softmax, which changes the shape of the resulting probability distribution. A low temperature (like 0.3) makes high-probability tokens even more dominant - the model becomes conservative. A high temperature (like 1.5) flattens the distribution, giving more weight to unlikely tokens. That lets the model explore less common word choices, making its output feel more imaginative - or sometimes, weirder.

What’s the advantage of top-p sampling over top-k?

Top-k uses a fixed number of tokens - say, the top 50. But when the model is confident, most of those 50 are implausible and still get a chance. And when it’s unsure, plausible tokens beyond the 50th get cut off. Top-p adapts: it picks the smallest group of tokens whose total probability reaches a threshold (like 90%). That means when the model is confident, it picks fewer tokens. When it’s unsure, it picks more. It’s more flexible and avoids arbitrary limits.

Can I see the actual probability distribution a model uses?

Yes. Most AI libraries let you extract the logits from model outputs. You can apply softmax yourself and see the full probability distribution across the entire vocabulary. Tools like Hugging Face’s Transformers library make this easy. You can then check which tokens had the highest scores and why the model chose one over another.

Do all language models use the same method for next-word prediction?

Nearly all of them produce logits and turn them into probabilities with softmax - that’s the standard. But how they sample from the distribution varies. Some use greedy sampling. Others use top-p or top-k. Some even combine multiple methods. The core math is the same, but the sampling strategy depends on the use case: creativity, speed, or control.

Chris Atkins

March 5, 2026 AT 01:37

Used to think it was reading my mind but nah it's just seen a trillion sentences and learned patterns

Like how you know 'bread and butter' sounds right but 'bread and hammer' doesn't

Same deal just way faster

Jen Becker

March 5, 2026 AT 11:50

Ryan Toporowski

March 6, 2026 AT 08:22

Never thought about how 'top-p' adapts like that

Kinda reminds me of how humans pick words too

Like when you're unsure you say more options

When you're sure you just say one

AI's doing the same thing

Love it

Samuel Bennett

March 7, 2026 AT 19:38

It's just exponentiation and normalization

Any undergrad with a calculator could do this

And don't get me started on 'tokens'

Why not just use words? Because the model's trained on garbage data from 4chan and Reddit

That's why 'qzxy' even shows up

It's not intelligence it's statistical noise

Rob D

March 9, 2026 AT 08:15

They say it's based on training data but what if the training data was rigged?

What if Big Tech fed it only pro-establishment phrases?

That's why it always says 'blue' after 'violets are'

Because they don't want you thinking about green violets

It's not math it's propaganda

And temperature? That's the dial they use to control how rebellious the AI gets

Keep it low so it never questions authority

They're not building intelligence they're building obedience

Franklin Hooper

March 10, 2026 AT 19:08

Technically a token is a lexical unit not a fragment of a word

And 'logits' are not 'raw scores' - they're unnormalized log-odds

There's a difference

And 'top-p' isn't 'smarter' - it's just a heuristic with no theoretical grounding

Most of this reads like a blog post written by someone who watched three YouTube videos

It's not wrong per se - just imprecise

And that's worse than wrong

Jess Ciro

March 11, 2026 AT 14:55

It's about control

They call it 'probability' but what they're really doing is encoding bias into every decimal point

Every time the model picks 'blue' over 'purple' it's not learning - it's obeying

And when you change temperature? You're not adjusting creativity - you're adjusting obedience

This isn't AI

This is a digital puppet master

saravana kumar

March 11, 2026 AT 19:38

Tamil selvan

March 12, 2026 AT 21:15

Mark Brantner

March 14, 2026 AT 00:16

then why does my ai always say 'as an ai' like 3x in every reply?

is it just confused?

or is it secretly trying to tell me something?

also i typed 'the sky is' and it said 'orange' with 12% chance

who let this happen??