For years, we've treated LLMs like black boxes. We prompt them, check the answer, and if it looks right, we move on. But 'looking right' is not the same as being 'provably correct.' The shift toward verified AI is about transforming these systems from creative engines into reliable tools that provide mathematical guarantees about their behavior. It is the difference between a pilot saying, "I think we're flying at the right altitude," and a flight computer proving it via sensors and logic gates.

The Core Pillars of AI Verification

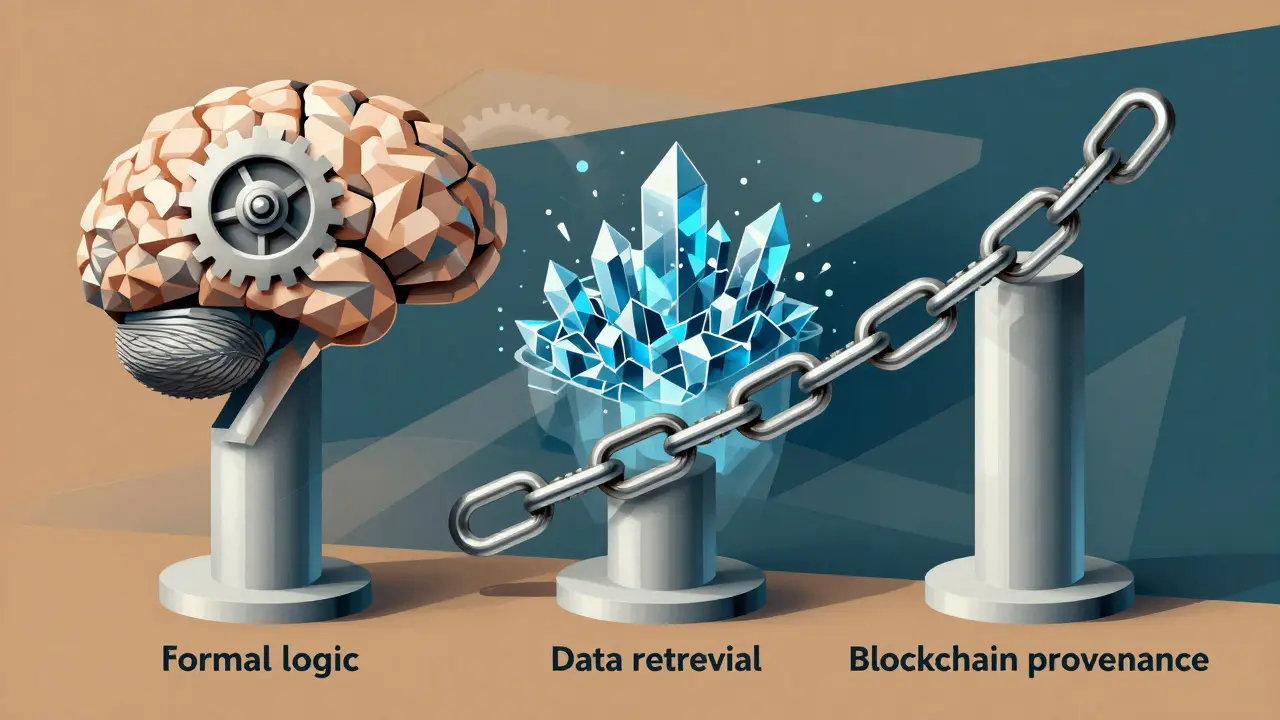

To move from a "best effort" output to a guaranteed one, verification systems generally rely on three primary mechanisms: formal methods, data-driven validation, and immutable auditing.

Formal Verification is the gold standard. Unlike traditional testing, where you run a thousand examples and hope for the best, formal verification uses mathematical logic to prove a system will always behave a certain way. Tools like Dafny and Kani allow developers to translate complex business rules into constraints. If the AI suggests a financial transaction that violates a regulatory rule, the formal verifier catches it not because it saw a similar error before, but because the transaction is mathematically impossible within the defined rules.
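The contrast between sampling and checking every case can be made concrete with a small sketch. This is not Dafny or Kani, which reason symbolically instead of enumerating inputs; it is a bounded exhaustive check over a finite domain, which is the simplest setting where "for all inputs" can be checked directly. The cap `LIMIT`, the bound `MAX_AMOUNT`, and the buggy `approve` policy are all hypothetical and exist only for illustration.

```python
import random

LIMIT = 10_000       # hypothetical regulatory cap per transaction, in whole dollars
MAX_AMOUNT = 20_000  # hypothetical upper bound on amounts the system can emit


def approve(amount: int) -> bool:
    """Toy approval policy with a deliberate off-by-one bug at the cap."""
    return amount <= LIMIT + 1  # bug: should be amount <= LIMIT


def satisfies_rule(amount: int) -> bool:
    """Specification: an approved transaction never exceeds LIMIT."""
    return (not approve(amount)) or amount <= LIMIT


def sample_test(trials: int, seed: int) -> list[int]:
    """Traditional testing: check a random sample and return any violations seen."""
    rng = random.Random(seed)
    samples = (rng.randint(0, MAX_AMOUNT) for _ in range(trials))
    return [a for a in samples if not satisfies_rule(a)]


def exhaustive_check() -> list[int]:
    """Check every amount in the bounded domain and return all counterexamples."""
    return [a for a in range(MAX_AMOUNT + 1) if not satisfies_rule(a)]
```

A random sample only finds the violation if it happens to draw the single bad amount, whereas the exhaustive check always reports it. Symbolic tools aim for the same coverage on domains far too large to enumerate.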

VerifAI represents the data-driven approach. This framework treats verification as a retrieval and reasoning problem. It uses an Indexer to pull relevant data from a data lake, a Reranker to prioritize the best evidence, and a Verifier to cross-reference the AI's claim against ground truth. This is particularly effective for structured data, such as tables or factual reports, where a clear "correct" answer exists in the source data.
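The three roles described above can be sketched in a few lines. This is not VerifAI's actual API; the `Row` type, the in-memory `DATA_LAKE`, and the function names `index`, `rerank`, and `verify` are hypothetical stand-ins, and exact string matching takes the place of the retrieval and reasoning models a real system would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    entity: str
    attribute: str
    value: str


# Hypothetical stand-in for a data lake: a small in-memory table.
DATA_LAKE = [
    Row("Acme Corp", "founded", "1999"),
    Row("Acme Corp", "headquarters", "Berlin"),
    Row("Globex", "founded", "2005"),
]


def index(claim_entity: str) -> list[Row]:
    """Indexer: retrieve candidate rows that mention the claimed entity."""
    return [r for r in DATA_LAKE if r.entity == claim_entity]


def rerank(rows: list[Row], claim_attribute: str) -> list[Row]:
    """Reranker: put rows about the claimed attribute first (stable sort)."""
    return sorted(rows, key=lambda r: r.attribute != claim_attribute)


def verify(entity: str, attribute: str, value: str) -> str:
    """Verifier: compare the claim against the best-ranked evidence."""
    ranked = rerank(index(entity), attribute)
    if not ranked or ranked[0].attribute != attribute:
        return "no_evidence"
    return "supported" if ranked[0].value == value else "refuted"
```

For example, `verify("Acme Corp", "founded", "1999")` returns `"supported"`, while a claim about an attribute that is absent from the table returns `"no_evidence"`, which mirrors the ground-truth requirement of this approach.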

Finally, we have immutable auditing. When an AI agent operates in a multi-stakeholder environment, you need a record that cannot be tampered with.

Numbers Protocol uses blockchain technology to create a provenance trail. By recording the origin and changes of a digital asset on a peer-to-peer network, it ensures that the verification process itself is transparent and auditable by any third party without needing to trust a single central authority.
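The tamper-evidence idea behind a provenance trail can be illustrated with a hash chain, where each record commits to the one before it. This is a single-process sketch and not Numbers Protocol's API: it has no peer-to-peer network or consensus, and the record layout and function names are assumptions made for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record's predecessor


def record_hash(prev_hash: str, event: dict) -> str:
    """Hash a record together with the hash of the record before it."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(chain: list[dict], event: dict) -> None:
    """Append an event, linking it to the current tail of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"prev": prev_hash, "event": event,
                  "hash": record_hash(prev_hash, event)})


def is_intact(chain: list[dict]) -> bool:
    """Recompute every hash and link; any edit to past records breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["prev"], entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Changing any earlier event makes `is_intact` return `False`, because the stored hash no longer matches the recomputed one. A distributed ledger adds replication on top of this, so that no single party can quietly rewrite the whole chain.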

Most current verification frameworks, including VerifAI, focus exclusively on data with ground truth. This creates a gap in our capabilities: we can verify the facts of an output, but we struggle to verify the nuance or intent. Furthermore, there is a persistent "symbolic gap." A system might be mathematically verified in a symbolic environment (like a piece of code), but when it interacts with the messy, unpredictable real world, the verification can break down. This is why high-stakes deployments still require a human-in-the-loop for the final sign-off.

An effective audit for a generative agent typically involves three steps:

Moreover, the skill set required is rare. You need people who understand both the probabilistic nature of neural networks and the rigid nature of symbolic logic. Practitioners often report spending six to twelve months studying formal methods before they can effectively apply them to generative AI. This talent gap is the primary reason why only about 17% of organizations with deployed AI have actually implemented formal verification.

As we move forward, the focus will shift toward real-time verification. Right now, most verification happens as a post-process (the AI generates, then the verifier checks). The next frontier is "constrained generation," where the AI is unable by construction to produce an output that violates its mathematical boundaries in the first place. This would effectively eliminate constraint-violating hallucinations in mission-critical tasks.
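One common way to picture constrained generation is as a mask applied before a token is chosen, so that disallowed outputs are never candidates. The sketch below shows a single greedy decoding step under that assumption; the token names, scores, and the `constrained_choice` helper are hypothetical, and a real system would apply such a mask at every step over the full vocabulary, typically driven by a grammar or schema.

```python
import math


def constrained_choice(logits: dict[str, float], allowed: set[str]) -> str:
    """Pick the highest-scoring token among those the constraint allows."""
    # Disallowed tokens get a score of negative infinity, so they can never win.
    masked = {tok: (score if tok in allowed else -math.inf)
              for tok, score in logits.items()}
    best = max(masked, key=masked.get)
    if masked[best] == -math.inf:
        raise ValueError("no allowed token available")
    return best
```

With scores `{"approve": 2.0, "deny": 1.0, "escalate": 0.5}` and only `deny` and `escalate` allowed, the function returns `"deny"` even though `approve` scored highest. The check happens before generation, not after it.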

Testing is probabilistic: it involves running a set of inputs and checking whether the outputs are acceptable. Verification is deterministic: it uses mathematical proofs to guarantee that the system will always satisfy specific constraints, regardless of the input.

Formal verification can stop hallucinations that violate defined constraints (like factual errors against a database), but it cannot stop "creative" hallucinations in subjective tasks where no ground truth exists.

High-stakes sectors like financial services, healthcare, and government are the primary adopters of verification because they face strict regulatory requirements and high costs associated with AI errors.

Blockchain provides an immutable ledger of provenance. It doesn't check if the AI's logic is right, but it proves that the data used for verification wasn't tampered with after the fact.

Formal verification is expensive to implement: it requires specialized expertise in mathematical logic and significant time for specification mapping. This is why it is more common in large enterprises than in small startups.

Constraints and the "Ground Truth" Problem

Verification isn't a magic wand; it has hard limits. The biggest hurdle is the distinction between objective and subjective data. If an AI agent is asked to summarize a legal contract, the verifier can check if the summary contradicts the contract's clauses (objective ground truth). However, if the agent is asked to evaluate if a marketing slogan is "inspiring," there is no mathematical proof for "inspiration."

| Method | Mechanism | Primary Strength | Major Weakness |
| --- | --- | --- | --- |
| Formal Verification | Mathematical Logic | Provable Guarantees | Extremely High Complexity |
| Data-Driven (VerifAI) | RAG & Cross-referencing | High Factual Accuracy | Requires Ground Truth |
| Blockchain Provenance | Cryptography/Ledgers | Immutability & Trust | Doesn't Verify Content Logic |
| Traditional Testing | Probabilistic Sampling | Fast and Cheap | No Guarantees |

The Audit Trail: From Compliance to Trust

In regulated industries, an audit isn't just a check-up; it's a legal requirement. The EU AI Act is a prime example, demanding that "high-risk" AI systems undergo strict conformity assessments. This means companies can't just say their AI is safe; they must provide a paper trail of how it was verified.

This level of scrutiny is why enterprise adoption is tiered. Large companies with over 1,000 employees are adopting these tools at three times the rate of small businesses. They have the budget to hire the specialized talent (formal verification experts) needed to bridge the gap between a prompt and a proof.

Implementation Hurdles and the Learning Curve

If you're thinking about implementing a verified AI pipeline, be prepared for a steep climb. This isn't something you can set up over a weekend. Translating a standard business rule (e.g., "Do not allow loans to users with a credit score below 600") into a mathematical constraint that a tool like Dafny can verify often takes cross-functional teams two to three weeks of dedicated effort.

Looking Toward 2026 and Beyond

We are moving toward a future where the "verified" badge is as important as the "AI-powered" label. Industry analysts suggest that by the end of 2026, nearly 65% of enterprise deployments will include some form of formal verification. We will likely see a hybrid approach: blockchain for provenance, formal logic for safety constraints, and RAG-based verifiers for factual accuracy.

What is the difference between AI testing and AI verification?

Can formal verification stop all AI hallucinations?

Which industries need this the most?

How does blockchain help in AI verification?

Is formal verification expensive to implement?

Vimal Kumar

April 12, 2026 AT 08:08

This is a great breakdown of where the industry is headed. Using RAG for factual grounding is definitely the most accessible starting point for most teams right now.

Rohit Sen

April 13, 2026 AT 17:26

Formal verification is just a fancy way of saying we're over-engineering a probabilistic system. It's cute that people think math can solve for "intent."

vidhi patel

April 13, 2026 AT 22:33

The blatant lack of attention to consistent capitalization in the section discussing the EU AI Act is absolutely appalling. One must maintain a rigorous standard of orthography when discussing legal mandates.

Noel Dhiraj

April 14, 2026 AT 01:24

love seeing the focus on the learning curve here man we just need more people to jump in and start playing with Dafny and kani its totally doable if you just dive in

Priti Yadav

April 14, 2026 AT 01:42

Blockchain provenance? Please. That's just another layer for the corporations to hide who's actually training these models on our stolen data. They want a "paper trail" that they control.

Ajit Kumar

April 15, 2026 AT 21:15

It is profoundly disheartening that we must rely on such complex mathematical architectures to ensure basic honesty in AI, yet we must acknowledge that the moral imperative to prevent harm in healthcare outweighs the mere inconvenience of a steep learning curve for developers who are far too accustomed to the path of least resistance in their coding practices.

Diwakar Pandey

April 17, 2026 AT 05:29

Interesting perspective on the symbolic gap. It's kind of like how we can prove a theorem on a whiteboard but then the actual implementation crashes because of a memory leak.

Geet Ramchandani

April 17, 2026 AT 14:00

This whole post is just a glorified marketing pitch for a few specific tools and it completely ignores the fact that most companies are too incompetent to even implement a basic RAG pipeline, let alone some high-level formal verification that will probably just break the moment a real-world edge case hits it because the people writing the constraints are just as clueless as the AI they're trying to fix.

Amit Umarani

April 18, 2026 AT 08:18

Typo in the first paragraph.