Tag: prompt injection

Mar 21, 2026

Access Control and Authentication Patterns for LLM Services: Securing AI Applications Today

Secure your LLM services with proper authentication and access control patterns. Learn how to prevent prompt injection, use OAuth2 for agents, and implement ABAC for dynamic permissions in 2026.

Mar 5, 2026

Incident Response Playbooks for LLM Security Breaches: How to Stop Prompt Injection, Data Leaks, and Harmful Outputs

LLM security breaches require specialized response plans. Learn how to handle prompt injection, data leaks, and harmful outputs with incident response playbooks built for AI systems rather than traditional IT.

Dec 22, 2025

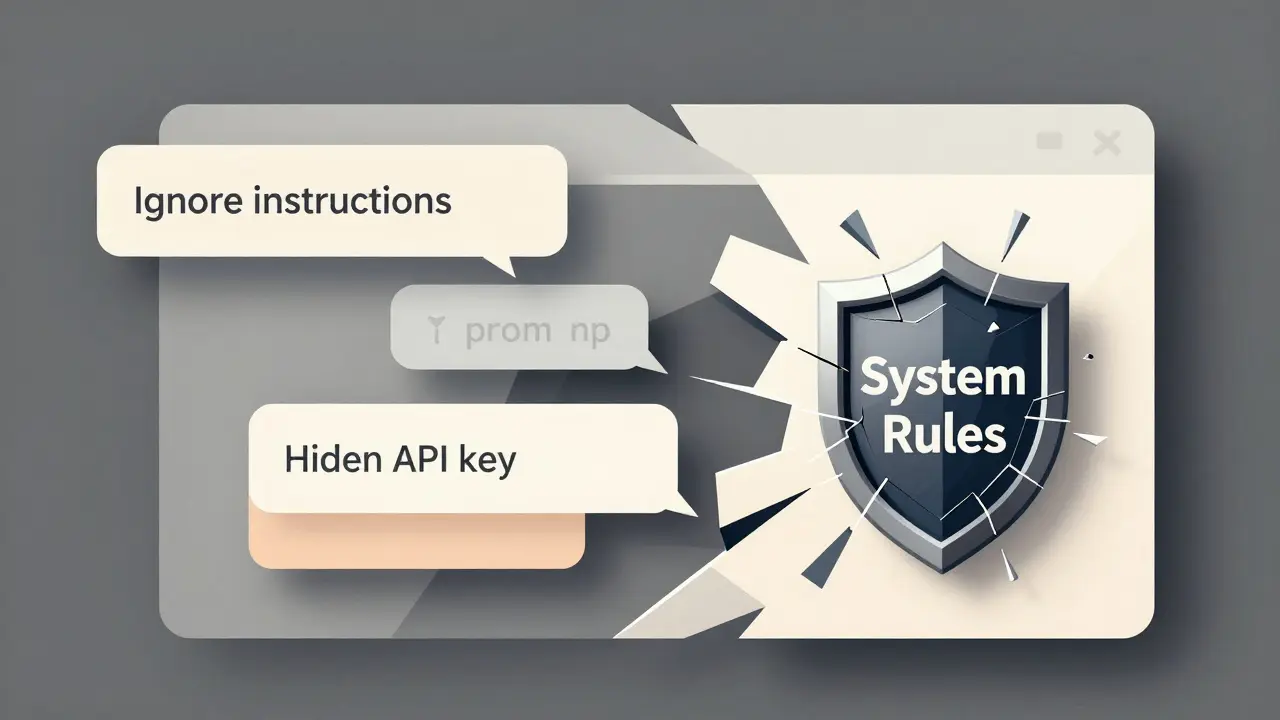

Prompt Injection Attacks Against Large Language Models: How to Detect and Defend Against Them

Prompt injection attacks manipulate AI systems by tricking them into ignoring their original instructions and revealing sensitive data. Learn how these attacks work, see real-world examples, and find proven defense strategies to protect your LLM applications.
