Tag: RAG

Grounded Generation with Structured Knowledge Bases for LLMs: How to Stop Hallucinations and Build Trust

Grounded generation with structured knowledge bases stops LLMs from making up facts. By connecting models to real data, companies cut hallucinations by 30-50% and build real trust. Here's how it works and why it's essential in 2026.

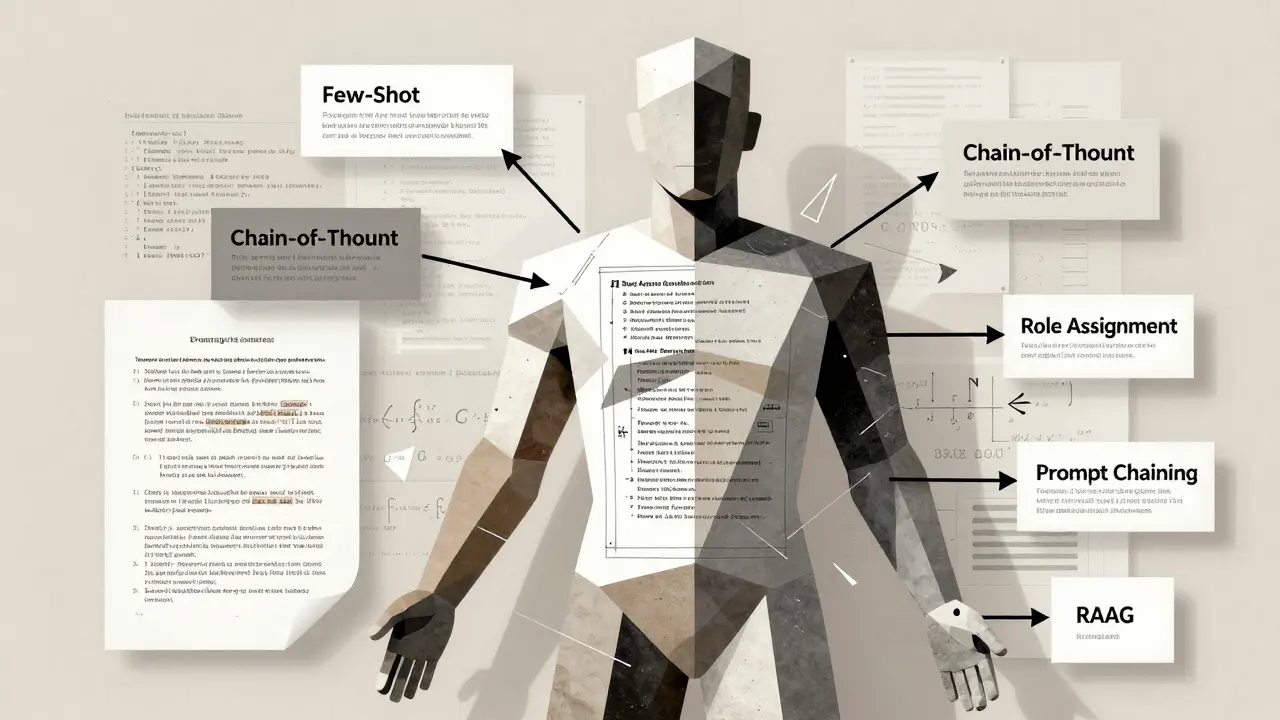

Prompt Engineering for Large Language Models: Key Principles and Proven Patterns

Learn the core principles and proven patterns of prompt engineering for large language models. Discover how few-shot, chain-of-thought, and RAG techniques improve AI output accuracy, and how to avoid common pitfalls that lead to vague or wrong answers.

Retrieval-Augmented Generation Advances in Generative AI: Better Search, Better Answers

Retrieval-Augmented Generation (RAG) lets AI answer questions using live data instead of relying only on outdated training data. It cuts hallucinations, reflects new information as soon as the underlying data is updated, and powers enterprise AI today. Learn how it works, where it shines, and what to avoid.

Hybrid Search for RAG: How Combining Keyword and Semantic Retrieval Boosts LLM Accuracy

Hybrid search combines keyword and semantic retrieval to fix the biggest flaws in RAG systems. It ensures LLMs get both exact terms and contextual meaning, which is critical for healthcare, legal, and developer tools.