Black Seed USA AI Hub - Page 7

Content Moderation for Generative AI: How Safety Classifiers and Redaction Keep Outputs Safe

Learn how safety classifiers and redaction techniques keep generative AI outputs safe from harmful content. Explore real tools, accuracy rates, and best practices for responsible AI deployment in 2025.

How Usage Patterns Affect Large Language Model Billing in Production

LLM billing in production depends entirely on usage patterns: token volume, model type, and real-time spikes. Learn how tiered, volume, and hybrid pricing models impact costs, why transparency reduces churn, and what tools can prevent billing disasters.

Hybrid Search for RAG: How Combining Keyword and Semantic Retrieval Boosts LLM Accuracy

Hybrid search combines keyword and semantic retrieval to fix the biggest flaws in RAG systems. It ensures LLMs get both exact terms and contextual meaning, which is critical for healthcare, legal, and developer tools.

The Psychology of Letting Go: Trusting AI in Vibe Coding Workflows

Vibe coding is changing how developers work with AI. Learn how to trust AI suggestions without losing control, why junior and senior devs approach it differently, and how to avoid dangerous pitfalls in production code.

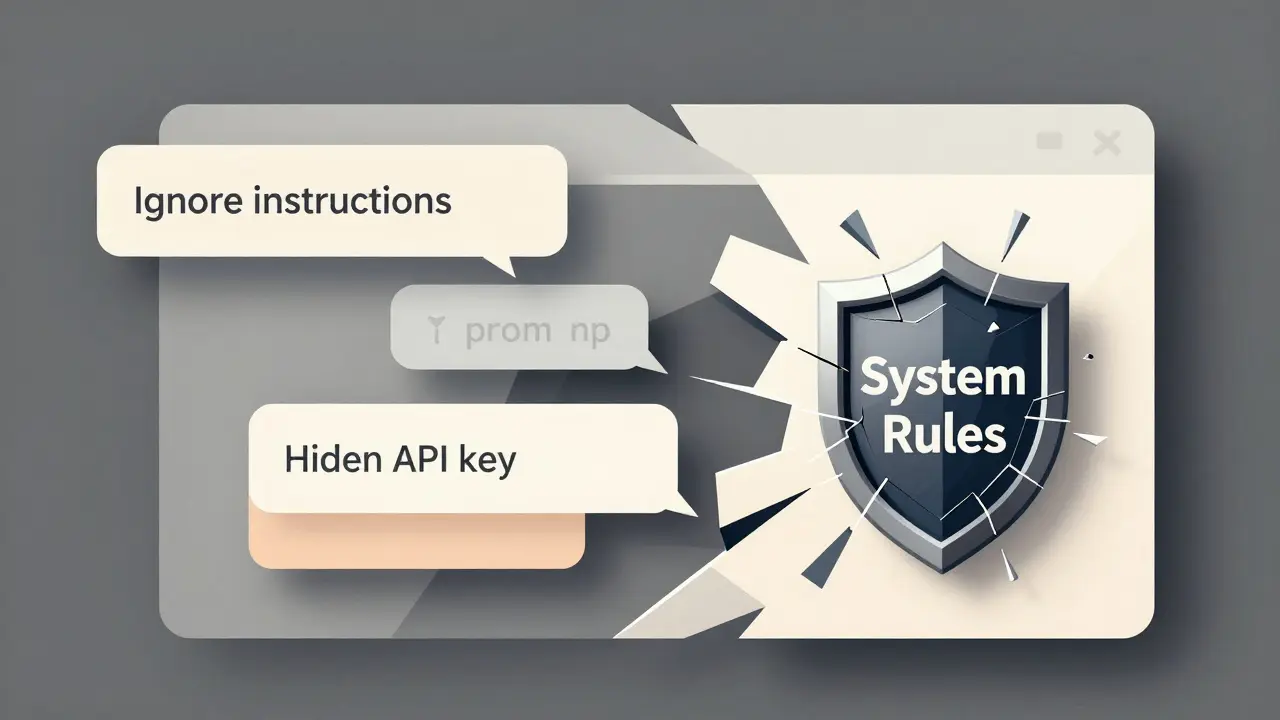

Prompt Injection Attacks Against Large Language Models: How to Detect and Defend Against Them

Prompt injection attacks manipulate AI systems by tricking them into ignoring instructions and revealing sensitive data. Learn how these attacks work, real-world examples, and proven defense strategies to protect your LLM applications.

How to Evaluate Compressed Large Language Models: Modern Protocols That Actually Work

Modern evaluation protocols for compressed LLMs go far beyond perplexity. Learn how LLM-KICK, EleutherAI LM Harness, and LLMCBench catch silent failures that traditional metrics miss, and why you can't afford to skip them.

Confidential Computing for LLM Inference: How TEEs and Encryption-in-Use Protect AI Models and Data

Confidential computing uses hardware-based Trusted Execution Environments to protect LLM inference by keeping data encrypted while in use. Learn how TEEs and GPU-based encryption are solving AI privacy risks for healthcare, finance, and government.

Secure Human Review Workflows for Sensitive LLM Outputs

Human review workflows are essential for preventing data leaks from LLMs in healthcare, finance, and legal sectors. Learn how to build secure, compliant systems that catch 94% of sensitive data exposures.

Deterministic vs Stochastic Decoding in Large Language Models: When to Use Each

Learn when to use deterministic vs stochastic decoding in large language models for accurate answers or creative outputs. Discover which methods work best for code, chatbots, and content generation.

How to Protect Model Weights and Intellectual Property in Large Language Models

Learn how to protect your LLM's model weights and intellectual property using watermarking, fingerprinting, and legal strategies. Essential for companies using AI in regulated industries.

How Large Language Models Use Probabilities to Choose Words and Phrases

Large language models generate text by predicting the next word based on probabilities learned from massive datasets. They don't understand meaning; they guess statistically likely sequences. This is how they sound smart without knowing anything.

Prompt Hygiene for Factual Tasks: How to Write Clear LLM Instructions That Avoid Errors

Learn how to write clear, precise LLM instructions that reduce hallucinations, prevent security risks, and ensure factual accuracy in high-stakes tasks like healthcare and legal work.