Tag: prompt engineering

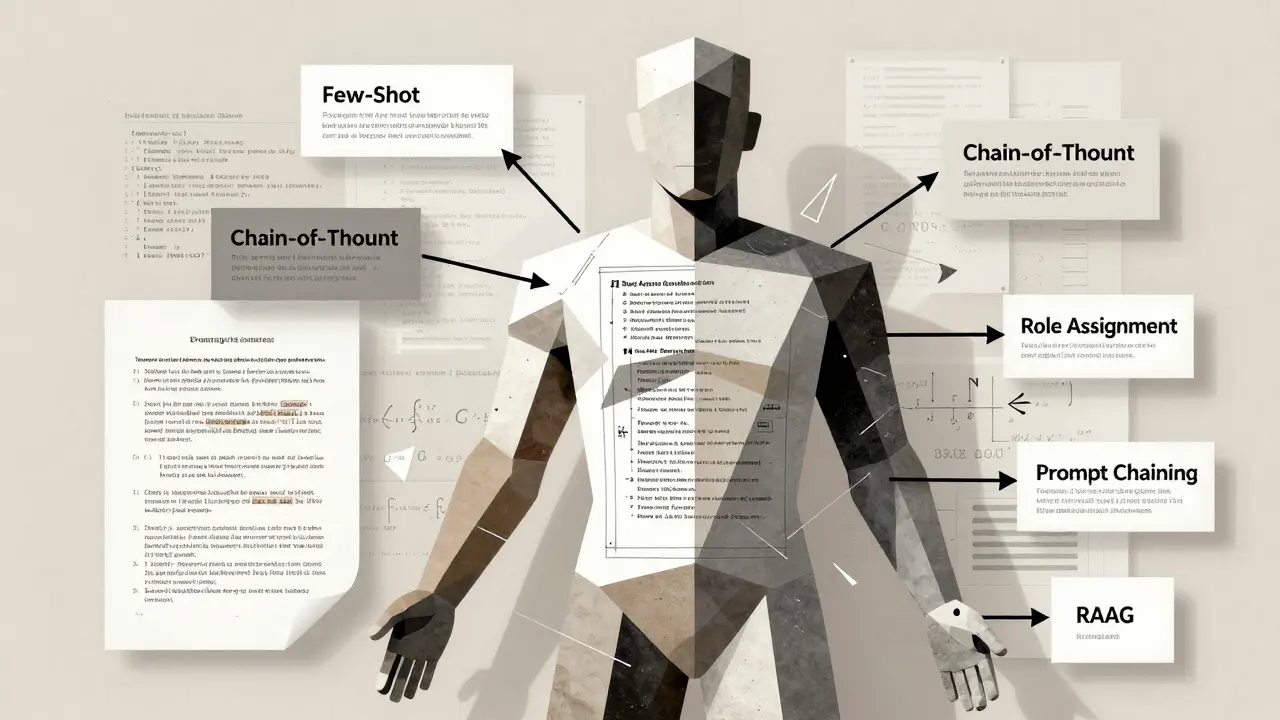

Prompt Engineering for Large Language Models: Key Principles and Proven Patterns

Learn the core principles and proven patterns of prompt engineering for large language models. Discover how few-shot, chain-of-thought, and RAG techniques improve AI output accuracy, and how to avoid common pitfalls that lead to vague or wrong answers.

Schema-Constrained Prompts: How to Force Reliable JSON Output from LLMs

Schema-constrained prompts force LLMs to generate clean, valid JSON every time, eliminating parsing errors in production systems. Learn how they work, which tools to use, and when they are worth the effort.

Few-Shot Prompting Patterns That Boost Accuracy in Large Language Models

Few-shot prompting improves LLM accuracy by 15-40% using just 2-8 examples. Learn the top patterns that work, where to apply them, and how to avoid common mistakes.

Context Layering for Vibe Coding: Feed the Model Before You Ask

Context layering transforms AI coding from hit-or-miss to reliable engineering. Learn how feeding structured, layered information before asking reduces errors, cuts hallucinations, and boosts success rates from 40% to 80%.

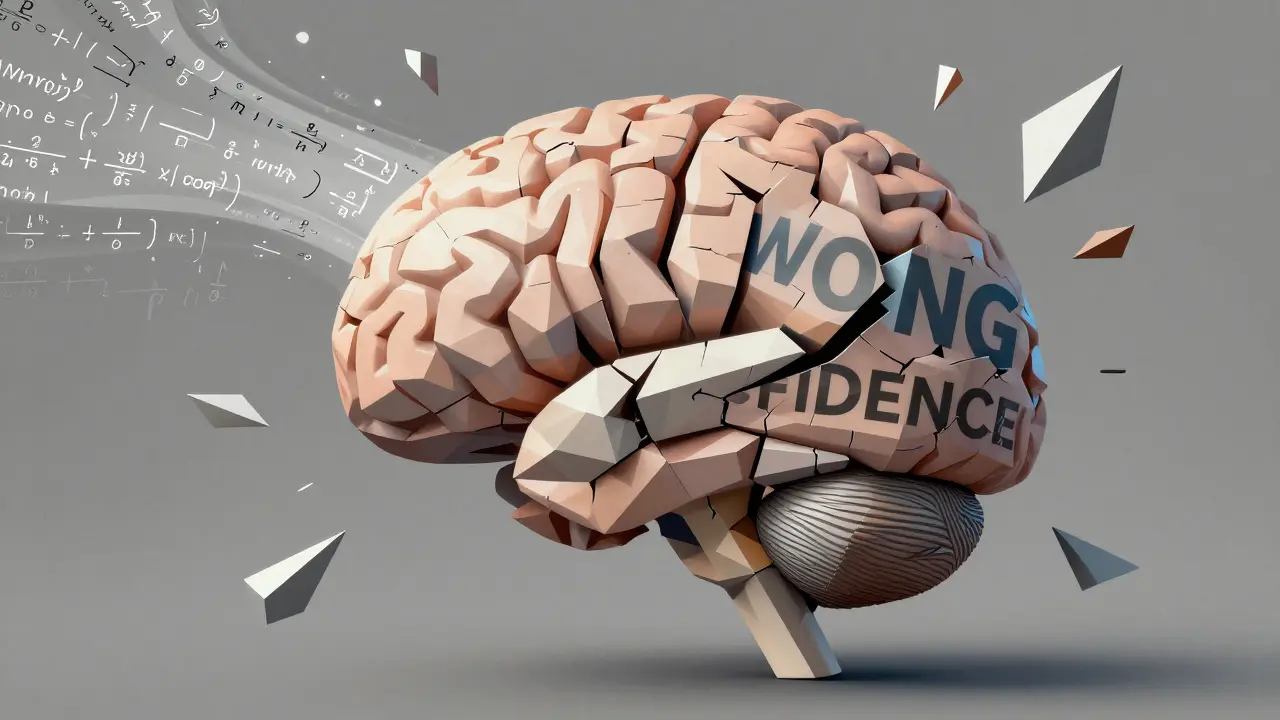

Error Messages and Feedback Prompts That Help LLMs Self-Correct

Learn how to use feedback prompts to help LLMs correct their own errors: when it works, when it fails, and how to implement it without falling into overconfidence traps.

Bias-Aware Prompt Engineering to Improve Fairness in Large Language Models

Bias-aware prompt engineering helps reduce unfair outputs in large language models by changing how you ask questions, not by retraining the model. Learn proven techniques, real results, and how to start today.

Style Transfer Prompts in Generative AI: Control Tone, Voice, and Format Like a Pro

Learn how to use style transfer prompts in generative AI to control tone, voice, and format without losing meaning. Get practical steps, real-world examples, and pro tips for marketing and content teams.

Prompt Hygiene for Factual Tasks: How to Write Clear LLM Instructions That Avoid Errors

Learn how to write clear, precise LLM instructions that reduce hallucinations, prevent security risks, and ensure factual accuracy in high-stakes tasks like healthcare and legal work.