Black Seed USA AI Hub - Page 4

Ethical Guidelines for Deploying Large Language Models in Regulated Domains

Ethical deployment of large language models in healthcare, finance, and justice requires more than generic AI guidelines. Learn the four core requirements, domain-specific rules, and real-world consequences of ignoring bias and accountability.

Energy Efficiency in Generative AI Training: How Sparsity, Pruning, and Low-Rank Methods Cut Power Use

Sparsity, pruning, and low-rank methods cut generative AI training energy by 30-80% without losing accuracy. Learn how these techniques work, why they matter, and how teams are using them today.

Retrieval-Augmented Generation Advances in Generative AI: Better Search, Better Answers

Retrieval-Augmented Generation (RAG) lets AI answer questions from live data instead of relying only on possibly outdated training data. It reduces hallucinations, reflects new information as soon as the underlying data changes, and powers many enterprise AI systems today. Learn how it works, where it shines, and what to avoid.

Interoperability Patterns to Abstract Large Language Model Providers

Interoperability patterns let you switch between LLM providers without breaking your app. Learn how LiteLLM, LangChain, and Model Context Protocol solve vendor lock-in, reduce costs, and improve reliability, with real-world examples and data.

Handing Off Vibe-Coded Prototypes to Engineering: What Documentation Actually Matters

Handing off vibe-coded prototypes to engineering teams fails without clear documentation. Learn the eight essential documents that turn fast AI prototypes into production-ready code, and why skipping them costs weeks of delay.

Evaluating Drift After Fine-Tuning: How to Monitor Large Language Model Stability

Drift after fine-tuning silently degrades LLM performance. Learn how to detect data, concept, and label drift using statistical methods, embedding analysis, and reward model tracking to maintain model accuracy and trust in production.


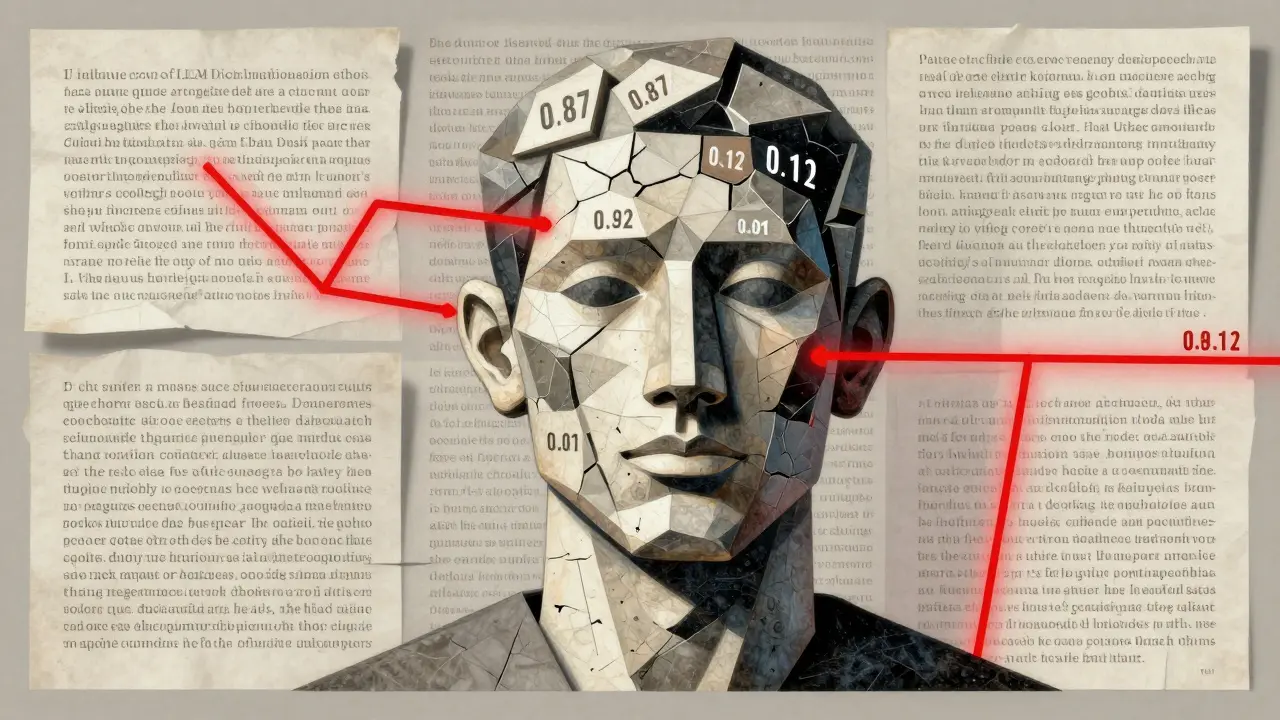

How Sampling Choices in LLMs Trigger Hallucinations and Affect Accuracy

Learn how sampling methods like temperature, top-k, and nucleus sampling directly impact LLM hallucinations. Discover the settings that reduce factual errors by up to 37% and how to apply them in real-world applications.

Cultural Sensitivity in Generative AI: How to Stop AI from Reinforcing Harmful Stereotypes

Generative AI often reinforces harmful stereotypes by reflecting biased training data. Learn how cultural insensitivity in AI leads to real-world harm, and what can be done to fix it.

Context Layering for Vibe Coding: Feed the Model Before You Ask

Context layering transforms AI coding from hit-or-miss to reliable engineering. Learn how feeding structured, layered information before asking reduces errors, cuts hallucinations, and boosts success rates from 40% to 80%.

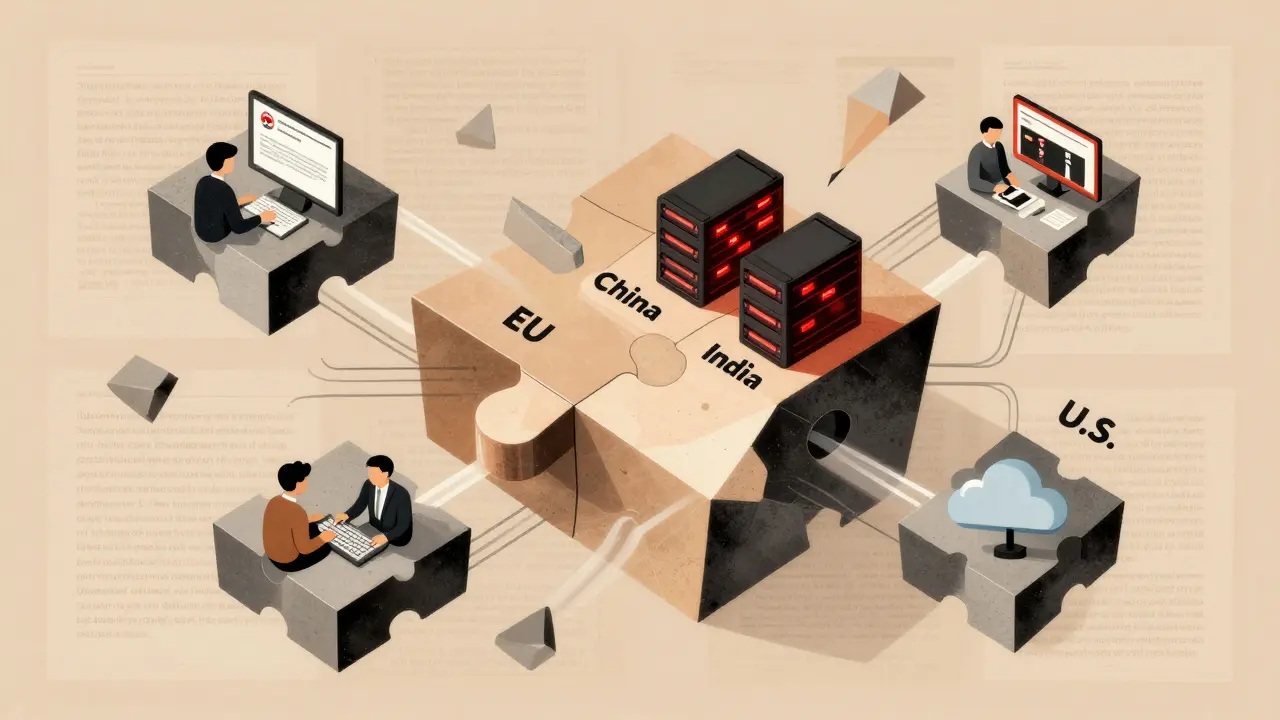

Compliance and Data Residency in LLM Deployments: Regional Controls

LLM deployments now face strict regional data laws that require splitting training data, model versions, and infrastructure by country. The EU's GDPR, China's PIPL, and India's DPDP Act force companies to build isolated systems or risk massive fines.

LLM Compression vs Model Switching: A Practical Guide for 2026

Learn when to compress large language models versus switching to smaller ones for optimal performance and cost. Discover real-world examples, benchmarks, and expert tips for deploying efficient AI systems in 2026.

Supervised Fine-Tuning for LLMs: A Practical Guide for Practitioners

A practical guide to implementing supervised fine-tuning for large language models, covering data preparation, hyperparameters, common pitfalls, and real-world examples to customize AI models effectively.